AI and Surveillance Concerns: Where Nonprofits Must Draw the Line

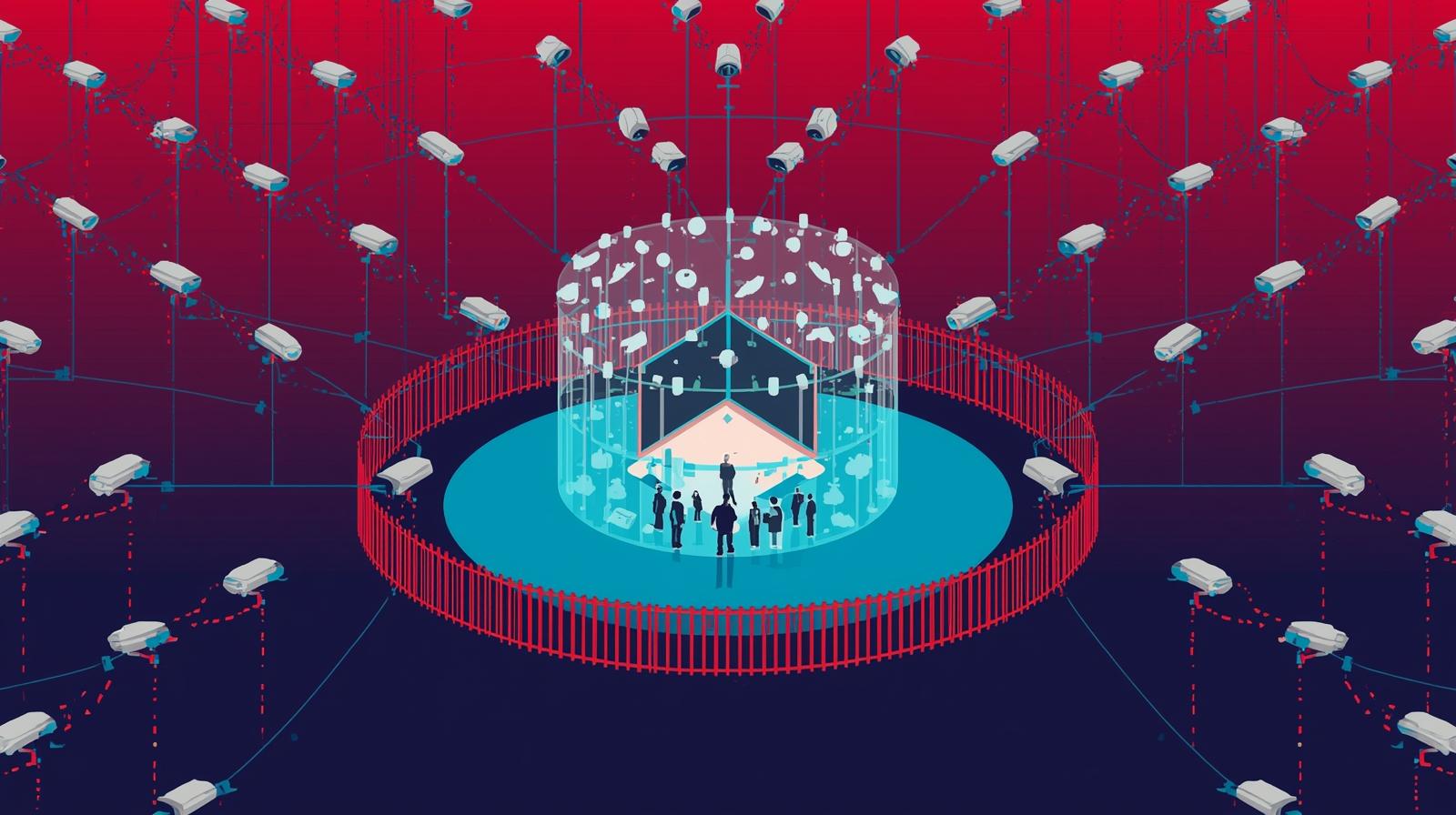

The same AI tools that help nonprofits serve communities more effectively can also track, monitor, and profile the people those organizations exist to protect. Knowing where to draw the line is not a technical question. It is a mission question.

A shelter for domestic violence survivors installs an AI-powered security camera system that uses facial recognition to identify visitors. The intent is safety: flagging individuals with restraining orders before they enter the building. But within weeks, the system is also logging every visit by every survivor, creating a detailed record of when each person comes and goes, how often they visit, and which staff members they meet with. That data sits on a cloud server managed by a third-party vendor whose privacy policies run to 47 pages and whose data-sharing agreements with law enforcement are buried in the fine print.

This is not a hypothetical. It is the kind of scenario playing out across the nonprofit sector as AI-powered surveillance tools become cheaper, more accessible, and more capable. The technology that promises to make organizations more efficient and their programs more effective is, in many cases, the same technology that can track, profile, and monitor the very people nonprofits exist to serve. The question facing nonprofit leaders in 2026 is not whether to use AI. It is where to draw the line between helpful data collection and surveillance that undermines your mission.

The distinction matters because nonprofits occupy a unique position. Unlike commercial businesses, they serve populations that often have no meaningful alternative. A person experiencing homelessness cannot take their business elsewhere if a shelter's AI system makes them uncomfortable. A family receiving food assistance cannot opt out of data collection without opting out of meals. This power imbalance is what transforms routine data practices into potential surveillance, and it is what makes the ethical stakes for nonprofits fundamentally different from those facing the private sector.

This article examines the specific surveillance risks that AI creates for nonprofits, from beneficiary tracking to employee monitoring to donor analytics. It offers a practical framework for identifying when data collection crosses the line and provides concrete steps for building organizational policies that protect the people your mission serves. If you have already worked through an AI ethics checklist for your organization, this article goes deeper into one of the most consequential areas that checklist should address.

Understanding AI Surveillance in the Nonprofit Context

Surveillance, in the context of nonprofit AI use, is not limited to cameras and tracking software. It includes any systematic collection and analysis of personal data that goes beyond what is necessary for service delivery. AI amplifies surveillance risk because it can extract patterns and insights from data that would be invisible to human observers, turning innocuous information into detailed behavioral profiles without anyone making a conscious decision to surveil.

Consider how AI surveillance can manifest across different areas of nonprofit operations. A youth mentoring program might use an AI platform to track communication patterns between mentors and mentees, ostensibly to ensure program quality. But the system also analyzes the emotional tone of messages, flags "concerning" language patterns, and builds behavioral profiles of teenagers who never consented to psychological assessment. A community health clinic might deploy AI scheduling software that optimizes appointment slots, but the platform also tracks which patients cancel frequently, correlates cancellation patterns with demographic data, and creates risk scores that influence how staff interact with patients before they walk through the door.

The challenge is that many of these capabilities are bundled into tools marketed as operational efficiency improvements. The surveillance functionality is not a separate feature you choose to enable. It is baked into the product, running quietly in the background, generating data that the nonprofit may not even realize it is collecting. This passive accumulation of surveillance data is, in many ways, more dangerous than intentional monitoring because it happens without deliberate decision-making and therefore without ethical review.

Beneficiary Tracking

AI tools that track service usage, location patterns, behavioral indicators, and engagement frequency can create detailed profiles of vulnerable individuals without their knowledge or meaningful consent.

- Facial recognition at service locations

- Automated sentiment analysis of client interactions

- Predictive risk scoring based on behavioral data

Staff and Volunteer Monitoring

AI productivity tools can cross from helpful to invasive when they track keystrokes, analyze communication patterns, score employee engagement, or create performance profiles from passive observation.

- Keystroke logging and activity tracking

- Email and chat sentiment analysis

- Automated performance scoring from passive data

Where Surveillance Crosses the Line

The line between data collection and surveillance is not always obvious, but it becomes clearer when you examine the power dynamics involved. Data collection serves the person whose data is being collected. Surveillance serves the organization collecting it. When a food bank records which items a family selects so it can stock the pantry with things people actually want, that is data collection in service of beneficiaries. When the same food bank uses AI to analyze selection patterns and predict which families are "at risk" of needing services for longer than expected, then shares those predictions with funders, that is surveillance dressed up as program evaluation.

The power imbalance between nonprofits and beneficiaries makes this distinction especially important. People who depend on your services for basic needs, whether that is food, shelter, healthcare, legal aid, or education, cannot freely consent to data collection because refusing means losing access to services. This is not hypothetical. Research in social services consistently shows that clients agree to data collection they are uncomfortable with because they fear losing access to programs. When AI enters this equation, it magnifies the problem because the scope and depth of data analysis expand far beyond what clients imagine when they sign an intake form.

The Netherlands offers a stark warning. Its SyRI system (System Risk Indication) linked data from multiple government agencies to flag benefit recipients as potential fraud risks, and it was deployed mainly in low-income and immigrant neighborhoods. In 2020, a Dutch court ruled that the system violated the right to private life under the European Convention on Human Rights. In the separate Dutch childcare benefits scandal, algorithmic risk scoring by the tax authority contributed to wrongful fraud accusations, repayment demands, and devastating personal consequences for tens of thousands of families. The lesson for nonprofits is direct: when you combine AI with data from vulnerable populations, the potential for harm scales with the power of the technology, not with your intentions.

Warning Signs: When Data Collection Becomes Surveillance

- You are collecting data that beneficiaries would be surprised to learn you have

- Data is being used for purposes beyond what was explained at the time of collection

- AI is generating inferences about individuals that they never directly shared

- Refusing data collection means losing access to services

- Third-party vendors have access to beneficiary data with unclear usage terms

- Data retention policies allow indefinite storage of personal information

The Beneficiary Data Dilemma

Nonprofits face a genuine tension between accountability and privacy that AI makes significantly more complicated. Funders increasingly expect granular, real-time data about program outcomes. Government grants require detailed reporting on who is being served and how. Impact measurement frameworks push organizations toward collecting more data, more often, with more precision. AI makes all of this technically possible in ways that were unimaginable five years ago.

The problem is that what funders want and what beneficiaries need are not always aligned. A foundation might want to know the demographic breakdown, service utilization patterns, and long-term outcome trajectories of every person your organization serves. Meeting that request with AI-powered analytics is technically straightforward. But doing so can mean building detailed profiles of individuals who came to you for help during the most vulnerable moments of their lives, profiles that sit in databases, flow through vendor systems, and persist long after the person has moved on.

This is what researchers have termed "surveillance philanthropy," the phenomenon where the drive for accountability and impact measurement transforms the relationship between nonprofits and beneficiaries into one of observer and observed. AI accelerates this dynamic because it can extract insights from data that would be too costly or time-consuming to analyze manually. The question every nonprofit leader should ask is whether the accountability demands you are meeting actually require the level of individual-level tracking that AI makes possible, or whether aggregate and anonymized data would serve the same purpose.

Organizations that have thought carefully about informed consent for AI in their programs have found that there are almost always ways to satisfy funder requirements without building surveillance infrastructure. The key is having those conversations proactively, both internally and with funders, rather than defaulting to maximum data collection because the technology makes it easy.
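One way to make the aggregate alternative concrete is a report that counts clients per category and masks small groups, so the funder never receives individual-level rows. The Python sketch below is illustrative only: the function name `aggregate_for_funder`, the `age_band` field, and the threshold of 5 are assumptions, not a standard that any particular funder or regulator requires.

```python
from collections import Counter

# Hypothetical threshold: groups smaller than this are masked in the report.
MIN_CELL_SIZE = 5


def aggregate_for_funder(records, field, min_cell_size=MIN_CELL_SIZE):
    """Return counts per category, masking any group below min_cell_size."""
    counts = Counter(record[field] for record in records)
    report = {}
    for category, count in sorted(counts.items()):
        # Small groups are reported as "<N" so individuals are harder to single out.
        report[category] = count if count >= min_cell_size else f"<{min_cell_size}"
    return report


# Made-up example data: only the field needed for the report is present.
records = (
    [{"age_band": "18-24"}] * 7
    + [{"age_band": "25-44"}] * 12
    + [{"age_band": "65+"}] * 2
)
print(aggregate_for_funder(records, "age_band"))
```

Masking alone does not guarantee anonymity. If a grand total is published alongside the table, a single masked group can sometimes be worked out by subtraction, so treat this as a starting point for the conversation with funders rather than a finished safeguard.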

Funder Pressure

Increasing demands for granular impact data push nonprofits toward more invasive data collection. Push back by demonstrating that aggregate data and anonymized analytics can meet reporting requirements without individual-level tracking.

Consent Gaps

Intake forms signed under duress do not constitute meaningful consent. When someone needs your services to survive, agreeing to data collection is not a free choice. Design consent processes that account for this power dynamic.

Data Persistence

AI systems can retain and learn from data long after the original purpose has been served. Establish clear data retention limits and automatic deletion schedules, especially for sensitive beneficiary information.
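A retention limit with an automatic deletion schedule can be as simple as a periodic job that compares each record's age against a per-category limit. The sketch below assumes a hypothetical `Record` type and made-up retention periods in `RETENTION_DAYS`; the real values depend on your service-delivery needs and compliance obligations.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Hypothetical retention periods in days, chosen only for illustration.
RETENTION_DAYS = {"visit_log": 90, "intake_form": 365, "case_note": 730}
DEFAULT_RETENTION_DAYS = 90  # assumption: unclassified data gets the shortest period


@dataclass
class Record:
    record_id: str
    category: str
    last_needed: date  # last date the record was required for its purpose


def split_by_retention(records, today):
    """Return (keep, delete) lists based on each record's retention period."""
    keep, delete = [], []
    for record in records:
        days = RETENTION_DAYS.get(record.category, DEFAULT_RETENTION_DAYS)
        expired = today - record.last_needed > timedelta(days=days)
        (delete if expired else keep).append(record)
    return keep, delete


records = [
    Record("r1", "visit_log", date(2025, 9, 1)),
    Record("r2", "intake_form", date(2025, 6, 1)),
]
keep, delete = split_by_retention(records, today=date(2026, 1, 1))
print([r.record_id for r in keep], [r.record_id for r in delete])
```

The sketch only decides which records are due for deletion. Actually removing them from vendor systems and backups, and from anything a model has already been trained on, is a separate question to raise with each vendor.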

Employee and Volunteer Monitoring

The surveillance conversation in nonprofits is not limited to beneficiaries. AI-powered employee monitoring tools have become increasingly common, and the nonprofit sector is not immune to their appeal. With tight budgets and high workloads, the promise of AI systems that can measure productivity, identify disengagement, and optimize workflows is understandably attractive. But the line between helpful workforce analytics and invasive surveillance is thinner than most leaders realize.

Consider what modern employee monitoring tools can do. AI platforms can track keystrokes per minute, log application usage, capture screenshots at intervals, analyze email and chat sentiment, score employee "engagement" based on digital behavior patterns, and flag workers whose activity patterns deviate from algorithmic norms. Some systems use webcam analysis to determine whether employees are "attentive" during virtual meetings. Others analyze communication networks to identify employees who are "isolated" or whose interaction patterns suggest they may be planning to leave.

For nonprofits, deploying these tools creates a contradiction that is difficult to resolve. Organizations whose missions center on dignity, empowerment, and human rights cannot credibly advocate for these values while subjecting their own staff to algorithmic surveillance. The hypocrisy is visible and corrosive, particularly for organizations working in social justice, human services, or community development. Staff who feel monitored rather than trusted become less engaged, less creative, and more likely to leave, the opposite of what the monitoring was supposed to achieve. Organizations working to overcome staff resistance to AI will find that surveillance-style monitoring is one of the fastest ways to destroy the trust needed for successful AI adoption.

The alternative is not to avoid all workforce analytics. There are legitimate uses for AI in understanding workload distribution, identifying burnout patterns at the team level (not the individual level), and streamlining administrative processes. The key distinction is between aggregate insights that help leaders make better organizational decisions and individual-level monitoring that treats staff as subjects of observation. If the data you are collecting about employees is granular enough to identify specific individuals and their behavior patterns, you have crossed from analytics into surveillance.

Surveillance Philanthropy: When Donor Analytics Cross the Line

The surveillance question extends to how nonprofits use AI to monitor and analyze their donors. AI-powered donor analytics have become sophisticated tools for fundraising, capable of analyzing social media activity, wealth indicators, giving patterns, website behavior, email engagement, and even publicly available financial records to build comprehensive donor profiles. Much of this is standard practice in modern fundraising. But AI pushes the boundaries of what is possible, and some of those boundaries should not be pushed.

When AI systems track a donor's website behavior to predict their likelihood of giving, that falls within reasonable relationship management. When those systems correlate website behavior with social media activity, public records, estimated net worth, political donations, and personal life events to create a psychological profile that predicts optimal ask timing and amounts, the practice starts to feel less like relationship management and more like manipulation. The donor has not consented to having their public information aggregated into a behavioral profile, and most would be uncomfortable if they knew the depth of analysis being performed.

The risk for nonprofits is both ethical and practical. If donors learn that your organization is using AI to build detailed psychological profiles from their online behavior, the trust damage can be severe and lasting. Transparency about AI use in fundraising is not just an ethical obligation. It is a strategic necessity. Organizations that use AI for knowledge management should ensure that donor data management practices are included in their governance frameworks and that the line between helpful personalization and invasive profiling is clearly defined.

Donor Analytics: Where to Draw the Line

Guidelines for ethical use of AI in donor relationship management

Acceptable Practices

- Analyzing giving history to personalize communications

- Using engagement data from your own platforms to segment audiences

- Predictive modeling with data donors knowingly provided

- AI-assisted content personalization based on stated interests

Concerning Practices

- Scraping social media to build psychological profiles

- Correlating public records to estimate net worth without disclosure

- Using AI to predict optimal emotional states for solicitation

- Sharing donor behavioral data with third-party AI platforms

Building an Anti-Surveillance Framework

Resisting surveillance creep requires more than good intentions. It requires organizational infrastructure. The following framework provides concrete steps for establishing and maintaining boundaries around AI-powered data collection. These are not theoretical principles. They are operational practices that your organization can implement regardless of size or technical capacity.

The foundation of any anti-surveillance framework is the principle of data minimization: collect only what you need, for as long as you need it, and nothing more. AI tools often default to maximum data collection because more data improves model performance. Your organization needs to override these defaults deliberately. This means reviewing the data collection settings of every AI tool you use, disabling features that collect data beyond your stated purpose, and establishing retention policies that require automatic deletion of personal data after a defined period.

Organizations that have successfully built AI champions within their teams can leverage those individuals to conduct regular surveillance audits, reviewing what data is being collected, how it is being used, and whether it aligns with the organization's stated values. These audits should happen quarterly at minimum and should include input from beneficiaries, staff, and board members.

Data Minimization

- Audit every AI tool for data collection beyond stated purpose

- Disable optional analytics and tracking features by default

- Set automatic data retention limits and deletion schedules

- Require justification for any new data collection beyond baseline

Purpose Limitation

- Document the specific purpose for each data point collected

- Prohibit using data for purposes beyond original collection intent

- Require separate consent for AI-driven analysis of existing data

- Ban secondary uses of beneficiary data for fundraising analytics

Consent Protocols

- Design opt-in rather than opt-out consent for AI data use

- Ensure declining consent does not reduce access to services

- Explain AI data use in plain language at a sixth-grade reading level

- Provide multilingual consent materials where needed

Regular Audits

- Conduct quarterly reviews of all AI data collection practices

- Include beneficiary representatives in audit processes

- Review vendor data practices and subcontractor agreements

- Document and address any scope creep in data collection
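The purpose limitation and consent rules above can be expressed as a single check that runs before any data is used. This is a minimal sketch under stated assumptions: `DataField`, `Client`, `may_use`, and the purpose labels are hypothetical names invented for illustration, not part of any existing product.

```python
from dataclasses import dataclass


@dataclass
class DataField:
    name: str
    documented_purposes: frozenset  # purposes explained at the time of collection


@dataclass
class Client:
    client_id: str
    ai_analysis_opt_in: bool = False  # opt-in: the default is no consent


def may_use(data_field, purpose, client, uses_ai):
    """Allow a use only if it matches a documented purpose and, for AI, an opt-in."""
    if purpose not in data_field.documented_purposes:
        return False  # secondary use beyond the original collection intent
    if uses_ai and not client.ai_analysis_opt_in:
        return False  # AI-driven analysis requires separate consent
    return True


items_selected = DataField("items_selected", frozenset({"pantry_stocking"}))
client = Client("c1")

print(may_use(items_selected, "pantry_stocking", client, uses_ai=False))
print(may_use(items_selected, "fundraising_analytics", client, uses_ai=False))
print(may_use(items_selected, "pantry_stocking", client, uses_ai=True))
```

Note that the check governs only how data is used. It never gates access to services, which keeps it consistent with the rule that declining consent must not reduce what a person can receive.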

Drawing the Line: A Decision Framework

Before deploying any AI tool that collects, analyzes, or generates insights from personal data, run it through these five questions. If the answer to any of them raises concerns, pause deployment until you have addressed the issue. This is not a compliance exercise. It is a values exercise that protects the people your organization exists to serve.

1. The Newspaper Test

If a journalist wrote a story about how your organization uses this tool, including the data it collects and the inferences it draws, would your beneficiaries, donors, and community feel that your practices are consistent with your mission? If the answer is anything other than a confident yes, redesign the implementation before proceeding.

2. The Power Reversal Test

If the people being monitored or analyzed by this tool were instead monitoring your organization's leadership with the same level of scrutiny, would you be comfortable? If the surveillance feels different when the power dynamic is reversed, that discomfort is telling you something important about the ethical implications.

3. The Minimum Viable Data Test

Could you achieve the same outcome with less data, less granularity, or less personal identification? If aggregate data would serve the same purpose as individual-level tracking, use aggregate data. If anonymized data would work as well as identified data, anonymize it. Default to the least invasive option that still achieves your legitimate purpose.

4. The Consent Authenticity Test

Can the people whose data you are collecting realistically say no without losing access to services, employment, or support? If not, their consent is not freely given, and you should treat the data collection with the same caution you would apply to any action taken without genuine consent.

5. The Vendor Transparency Test

Do you know exactly what happens to the data once it enters the vendor's system? Can you confirm that it is not being used to train models, shared with third parties, or retained beyond your control? If you cannot answer these questions with certainty, you do not have sufficient oversight to deploy the tool responsibly.
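For teams that want a record of each review, the five tests can be captured as a simple checklist in which any unanswered or failed test pauses deployment. The sketch below is one possible encoding; the test keys and the `review_deployment` function are assumptions made for illustration, and the judgment behind each answer still has to come from people.

```python
# Keys are shorthand for the five tests described above.
TESTS = (
    "newspaper",
    "power_reversal",
    "minimum_viable_data",
    "consent_authenticity",
    "vendor_transparency",
)


def review_deployment(answers):
    """Return whether to proceed and which tests raised concerns.

    `answers` maps a test name to True when the tool clearly passes.
    A missing or non-True answer counts as a concern, so an incomplete
    review can never approve a deployment.
    """
    concerns = [test for test in TESTS if answers.get(test) is not True]
    return {"proceed": not concerns, "concerns": concerns}


# Example: a tool that passes four tests but has unclear vendor data terms.
result = review_deployment(
    {
        "newspaper": True,
        "power_reversal": True,
        "minimum_viable_data": True,
        "consent_authenticity": True,
        "vendor_transparency": False,
    }
)
print(result)
```

Treating a missing answer as a concern is a deliberate design choice: it mirrors the rule that deployment pauses until every issue has been addressed.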

Practical Steps for Immediate Action

Building an anti-surveillance posture does not require a complete technology overhaul. It requires deliberate choices, applied consistently. The following steps can be implemented by any nonprofit, regardless of technical capacity, and they create immediate protection for the people your organization serves.

Inventory every AI tool your organization uses

Document what data each tool collects, where it is stored, who has access, and how long it is retained. Include tools that staff may have adopted independently without formal approval.

Review vendor data practices

Read the privacy policies and terms of service for every AI vendor you use. Specifically check whether your data is used for model training, shared with third parties, or subject to law enforcement requests without your notification.

Disable default tracking features

Many AI platforms enable extensive data collection by default. Go through each tool's settings and disable analytics, tracking, and data-sharing features that are not essential to your stated purpose.

Establish a data retention policy

Set maximum retention periods for all personal data, with automatic deletion triggers. Beneficiary data should be retained only as long as required for service delivery and compliance, not indefinitely "in case we need it."

Create a beneficiary data rights statement

Publish a clear, plain-language statement explaining what data you collect, why you collect it, how AI uses it, and what rights beneficiaries have regarding their information. Make this available in every language your clients speak.

Train staff on surveillance awareness

Ensure all team members understand the difference between helpful data collection and surveillance, and empower them to flag concerns when they see AI tools being used in ways that feel inconsistent with organizational values.
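The inventory step lends itself to a lightweight structured record, even if it lives in a spreadsheet in practice. The sketch below shows one hypothetical shape for an entry and a helper that lists what is still undocumented; the `ToolEntry` fields mirror the items named in the inventory step, and every name and example value here is an assumption.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ToolEntry:
    name: str
    data_collected: Optional[str] = None
    storage_location: Optional[str] = None
    who_has_access: Optional[str] = None
    retention_period: Optional[str] = None
    formally_approved: bool = False  # False for tools staff adopted on their own


REQUIRED_FIELDS = (
    "data_collected",
    "storage_location",
    "who_has_access",
    "retention_period",
)


def inventory_gaps(entry):
    """Return the names of inventory fields that are still undocumented."""
    return [name for name in REQUIRED_FIELDS if not getattr(entry, name)]


# Made-up example: a scheduling tool with only part of its record filled in.
scheduler = ToolEntry(
    name="Example scheduling assistant",
    data_collected="appointment times, cancellation history",
    storage_location="vendor cloud",
)
print(inventory_gaps(scheduler))
```

An entry with open gaps is a prompt to go back to the vendor or the staff member who adopted the tool, not a verdict on the tool itself.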

Conclusion

The temptation to collect more data because AI makes it possible is real, and it is growing. Every AI vendor promises better outcomes through deeper analytics, more comprehensive tracking, and more granular insights. Some of those promises are genuine. But for nonprofits, the question is never simply "can we collect this data?" It is "should we, given who we serve and the trust they place in us?"

The organizations that will maintain public trust in the age of AI are those that choose restraint when technology offers excess. They will collect what they need and delete what they do not. They will design consent processes that respect the power dynamics inherent in service delivery. They will audit their AI tools for surveillance capabilities they never asked for. And they will be willing to push back on funders, vendors, and board members who equate more data with better outcomes.

Drawing the line on AI surveillance is not about rejecting technology. It is about ensuring that the tools you adopt are consistent with the values that define your mission. The people you serve trusted you before AI, and maintaining that trust in a world of ubiquitous data collection requires active, deliberate, and ongoing commitment to the principle that collecting less can sometimes achieve more.
