When AI Says No: Handling Algorithmic Denials in Service Delivery

Algorithmic systems are making or influencing decisions about who receives benefits, housing, services, and support, often with catastrophic results. For nonprofits serving vulnerable populations, understanding how these denials happen and building strong protections against them is an urgent ethical obligation.

Somewhere in your community, a person who qualifies for emergency food assistance is being denied because an algorithm decided their documentation was incomplete. A family eligible for housing support isn't getting it because a risk score placed them in the wrong priority tier. A client who needs mental health services can't access them because a triage system flagged their case as lower urgency.

Algorithmic denials in service delivery aren't hypothetical. They're happening at scale, and the people most affected are the ones who most need support: low-income individuals with thin digital footprints, immigrants with complex documentation situations, people with disabilities navigating inaccessible systems, communities that have been historically under-served and over-surveilled. The organizations meant to help them are often the last line of defense when automated systems fail.

The stakes for nonprofits are significant in multiple directions. Your organization may be using AI tools that are denying your clients services, even if you didn't intend that outcome. You may be absorbing the burden of clients wrongly denied by government or vendor systems your organization didn't choose. Or you may be considering AI tools for service delivery without fully understanding the denial risks they carry.

This article examines how algorithmic denials work, why they cause disproportionate harm to vulnerable populations, what the documented failures look like, and what governance frameworks actually protect against these outcomes. Understanding this territory is no longer optional for nonprofit leaders: according to a 2025 survey, 92% of nonprofits now use AI in some form, yet nearly half have no AI governance policy. That gap between adoption and accountability is where algorithmic harm thrives.

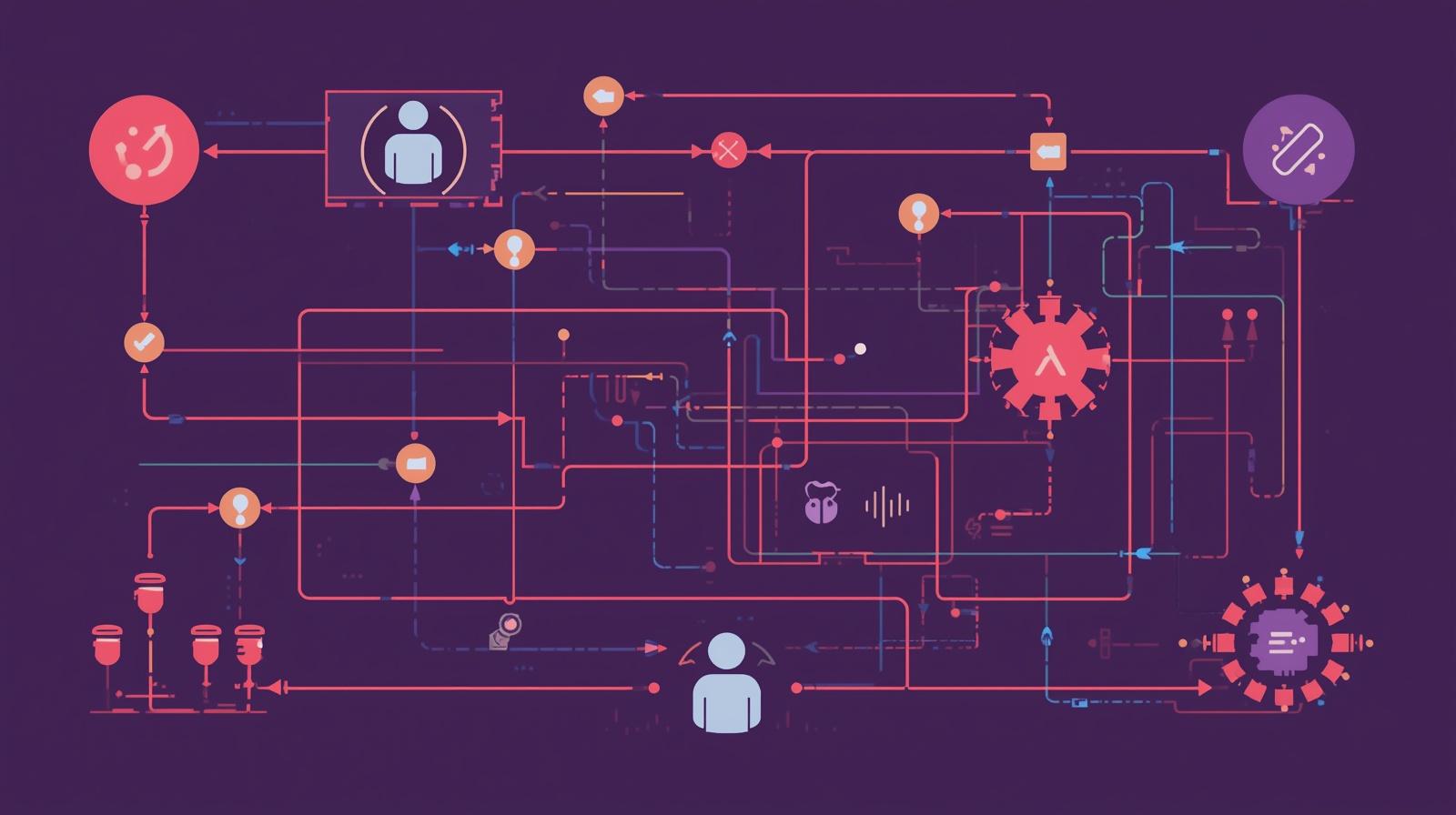

How Algorithmic Denials Happen

Algorithmic denials occur when automated systems make or heavily influence decisions that exclude people from services, benefits, or support they are entitled to or would benefit from. These aren't simply computer errors; they reflect specific design choices, data quality problems, and structural inequalities that get encoded into the logic of automated systems.

One fundamental cause is biased training data. When AI systems are trained on historical data that reflects past discrimination, they learn to replicate that discrimination. A system trained on historical housing assistance approval data will learn patterns from a period when certain neighborhoods or demographic groups faced systematic barriers. It then applies those patterns to new applicants, perpetuating the same exclusions under the guise of algorithmic objectivity.

Algorithmic exclusion is a distinct but related problem. Some people simply don't have the kind of digital data trail that algorithms need to assess them. Immigrants without long US residency histories, elderly individuals with limited digital activity, people who have lived outside formal financial and government systems, and communities in rural or low-connectivity areas may be invisible to algorithms that rely on credit histories, social media data, or government benefit records. Their invisibility reads as risk, not neutrality.

Documentation requirements create another pathway to exclusion. Algorithmic eligibility systems often require specific document types in specific formats. People who are entitled to services but face barriers to obtaining documentation are systematically disadvantaged even when their underlying eligibility is clear: people fleeing domestic violence who left their ID behind, people recently released from incarceration who lack standard ID, and people with disabilities that make complex documentation requirements difficult to navigate.

Biased Training Data

Historical data encoding past discrimination is fed into AI systems, which then replicate those discriminatory patterns under the appearance of neutral, data-driven decision-making.

Algorithmic Exclusion

People with sparse digital footprints, immigrants, elderly individuals, and rural populations lack the data inputs algorithms need, causing them to be assessed as high-risk or ineligible.

Documentation Barriers

Rigid documentation requirements exclude people who have legitimate eligibility but face structural barriers to obtaining or presenting standard paperwork in required formats.

When Algorithmic Denials Go Catastrophically Wrong

The history of algorithmic systems in human services isn't speculative. There are well-documented cases where automated systems produced massive numbers of wrongful denials, and where the burden of correcting those failures fell heavily on nonprofits and legal aid organizations that had nothing to do with designing the systems.

Indiana's attempt to automate welfare services in the mid-2000s, through a contract with IBM, resulted in more than a million benefit denials in the first three years, a 54% increase over the prior period. The automated system struggled with documentation that didn't fit its required formats and issued denials for procedural reasons even when underlying eligibility was clear. Nonprofit legal aid organizations were flooded with cases from people who had lost food assistance, Medicaid coverage, and other critical benefits. The state cancelled the contract in 2009, and years of litigation between Indiana and IBM followed.

Michigan's unemployment fraud detection algorithm, implemented in 2013, accused approximately 48,000 people of fraud based on automated matching of records. A subsequent state review found that 93% of the fraud determinations it examined were incorrect. People were required to repay benefits they had legitimately received, with interest and penalties, based on errors that took years to correct. Nonprofit legal services organizations shouldered much of the work of helping wrongly accused individuals navigate appeals.

These failures share a structural pattern: automated systems optimized for efficiency produced false positives at high rates; the cost of those errors fell disproportionately on vulnerable individuals; and the organizational burden of addressing the fallout landed on under-resourced nonprofits and legal aid organizations. The companies that built and sold the systems were largely insulated from the consequences.

This pattern isn't only historical. Contemporary examples include healthcare utilization management algorithms that deny treatments based on population-level data without accounting for individual circumstances; child welfare risk assessment tools that flag families in low-income neighborhoods for investigation at disproportionate rates; and housing allocation systems that use credit and rental history data in ways that systematically disadvantage people who have faced poverty or incarceration.

The Accountability Vacuum Problem

When algorithmic systems cause harm, responsibility diffuses across vendors, government agencies, and nonprofits. No single party feels accountable, yet the costs concentrate on the most vulnerable people and the organizations serving them.

- Technology vendors argue they built what was specified and that the government agency or nonprofit approved the design.

- Government agencies point to vendor responsibility for technical failures and to legal eligibility standards they are required to enforce.

- Nonprofits deployed the tools in good faith but lack the technical capacity to audit them and didn't build appeal processes into their contracts.

- Clients experience the denial, navigate complex appeal processes, and absorb the material harm of losing access to services they need.

What Nonprofits Can Actually Do About This

The governance gap in nonprofit AI use is real and consequential, but it's also addressable. The most effective protections against algorithmic denial don't require technical expertise. They require intentional organizational policies, robust human oversight, and a commitment to treating clients as the primary stakeholders in any AI deployment decision.

The starting point is a clear-eyed audit of what AI tools your organization currently uses and what decisions those tools influence. Most organizations are surprised to discover how many algorithmic systems they've adopted, often incrementally, without ever examining them collectively. Software that screens program applicants, tools that prioritize case assignments, platforms that determine which clients receive outreach, eligibility systems with automated verification requirements: all of these are making or influencing decisions that affect access to your services.
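The inventory itself can live in a spreadsheet, but it helps to record the same few facts for every tool. As a minimal sketch in Python (the tool names, vendors, and field names below are hypothetical placeholders, not real products), an inventory can note which decisions each tool touches and pull out the ones that affect access to services:

```python
from dataclasses import dataclass, field


@dataclass
class AITool:
    """One row in a hypothetical inventory of AI tools in use."""
    name: str
    vendor: str
    decisions_influenced: list[str] = field(default_factory=list)
    affects_service_access: bool = False  # does it shape who gets served, or when?


def access_affecting(inventory: list[AITool]) -> list[str]:
    """Return the names of tools that make or influence service-access decisions."""
    return [tool.name for tool in inventory if tool.affects_service_access]


# Hypothetical example entries.
inventory = [
    AITool("Applicant screener", "Vendor A", ["program intake"], True),
    AITool("Case router", "Vendor B", ["case assignment priority"], True),
    AITool("Newsletter drafting assistant", "Vendor C", ["donor communications"], False),
]

print(access_affecting(inventory))
```

The point of the single boolean is to force an explicit answer for every tool; anything marked `True` is a candidate for the stricter oversight discussed later in this article.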

The mRelief model offers an instructive contrast to the harmful systems described above. mRelief built a SNAP eligibility screening tool with an explicit inclusion bias: the system is designed to maximize who might be eligible and generate as few false negatives as possible. Rather than screening people out, it screens people in. Since 2014, the organization has helped unlock over $1.4 billion in SNAP benefits for 4 million individuals. The design principle of inclusion rather than exclusion produced radically different outcomes than systems built around fraud prevention and cost reduction.

For organizations using AI in service delivery, the mRelief principle translates into a design question worth asking about every tool: is this system designed to help eligible people access services, or to screen out ineligible people? The two goals call for opposite approaches to error tolerance, because a false positive (letting through someone who doesn't qualify) carries different costs than a false negative (excluding someone who does). For nonprofit service organizations, whose mission is to serve people in need, erring toward inclusion is often the ethically correct choice.
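The inclusion principle can be made explicit by assigning a cost to each kind of error and choosing the screening cutoff that minimizes total weighted cost on a sample of past cases whose true eligibility was later confirmed. The sketch below assumes a hypothetical tool that outputs a score where higher means "more likely eligible"; the scores, labels, and cost weights are invented for illustration, and the weights are a value judgment your organization sets, not a technical constant.

```python
def error_counts(scores, eligible, threshold):
    """Count (false negatives, false positives) when score >= threshold means 'screen in'."""
    fn = sum(1 for s, e in zip(scores, eligible) if e and s < threshold)
    fp = sum(1 for s, e in zip(scores, eligible) if not e and s >= threshold)
    return fn, fp


def choose_threshold(scores, eligible, cost_fn, cost_fp):
    """Return the candidate cutoff with the lowest weighted error cost."""
    candidates = sorted(set(scores)) + [float("inf")]  # inf = screen everyone out
    best_t, best_cost = None, None
    for t in candidates:
        fn, fp = error_counts(scores, eligible, t)
        cost = cost_fn * fn + cost_fp * fp
        if best_cost is None or cost < best_cost:
            best_t, best_cost = t, cost
    return best_t


# Hypothetical review sample: model scores and confirmed eligibility.
scores = [0.2, 0.35, 0.4, 0.55, 0.6, 0.7, 0.8, 0.9]
eligible = [False, True, False, True, False, True, True, True]

fraud_cutoff = choose_threshold(scores, eligible, cost_fn=1, cost_fp=5)
inclusion_cutoff = choose_threshold(scores, eligible, cost_fn=5, cost_fp=1)
print(fraud_cutoff, error_counts(scores, eligible, fraud_cutoff))          # 0.7 (2, 0)
print(inclusion_cutoff, error_counts(scores, eligible, inclusion_cutoff))  # 0.35 (0, 2)
```

With these invented numbers, weighting false positives more heavily (a fraud-prevention framing) picks a cutoff that screens out two eligible people, while weighting false negatives more heavily (an inclusion framing) screens out no eligible people and lets two ineligible people through. The same model produces very different exclusion patterns depending on which error the organization decides to fear more.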

Build Human Override Into Every System

Non-negotiable requirement for any AI in service delivery

Every AI system influencing service access decisions must have a clear, accessible mechanism for human review and override. This means:

- Staff are explicitly authorized to override AI recommendations with documented reasoning

- Clients denied services receive plain-language explanation of the denial basis and appeal rights

- Appeals are reviewed by humans, not by the same algorithm that issued the denial

- Override rates are tracked: high override rates signal a tool that shouldn't be trusted
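Override tracking can start from a simple log of what the tool recommended and what staff actually decided. A minimal sketch in Python, assuming a hypothetical log format of (tool, AI recommendation, final decision) and an illustrative 20% review trigger that each organization would set for itself:

```python
from collections import defaultdict


def override_rates(decisions):
    """decisions: iterable of (tool, ai_recommendation, final_decision) tuples.
    Returns, per tool, the share of cases where staff decided differently."""
    totals = defaultdict(int)
    overrides = defaultdict(int)
    for tool, ai_rec, final in decisions:
        totals[tool] += 1
        if ai_rec != final:
            overrides[tool] += 1
    return {tool: overrides[tool] / totals[tool] for tool in totals}


def tools_needing_review(rates, max_rate=0.20):
    """Return tools whose override rate exceeds the (illustrative) review trigger."""
    return sorted(tool for tool, rate in rates.items() if rate > max_rate)


# Hypothetical decision log.
decisions = [
    ("eligibility_screener", "deny", "approve"),
    ("eligibility_screener", "deny", "deny"),
    ("eligibility_screener", "approve", "approve"),
    ("eligibility_screener", "deny", "approve"),
    ("case_prioritizer", "low", "low"),
    ("case_prioritizer", "high", "high"),
    ("case_prioritizer", "low", "low"),
    ("case_prioritizer", "low", "high"),
    ("case_prioritizer", "high", "high"),
]

rates = override_rates(decisions)
print(rates)
print(tools_needing_review(rates))
```

Here staff reversed the eligibility screener in half of its cases, which is the kind of signal that should prompt a pause and an investigation. The direction of overrides matters too: frequent reversals of denials suggest the tool is excluding eligible clients.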

Implement Equity Monitoring

Track outcomes by demographic to catch bias before it scales

Algorithmic bias is often invisible unless you actively look for it in disaggregated outcome data. Monthly equity monitoring should track:

- Service approval and denial rates by race, ethnicity, and disability status

- Appeal outcomes and override rates by demographic group

- Documentation rejection rates by immigrant status or housing situation

- Defined disparity thresholds that trigger mandatory review and corrective action
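A disparity threshold can be made concrete with a ratio test. One widely used benchmark, borrowed from the four-fifths rule in US federal guidelines on employee selection, flags any group whose approval rate falls below 80% of the highest group's rate. The sketch below applies that ratio to service approvals; the group labels and counts are invented, and the 0.8 threshold is an assumption to adapt, not an established standard for service delivery.

```python
from collections import Counter


def approval_rates(records):
    """records: iterable of (group, approved) pairs; returns approval rate per group."""
    totals, approvals = Counter(), Counter()
    for group, approved in records:
        totals[group] += 1
        if approved:
            approvals[group] += 1
    return {g: approvals[g] / totals[g] for g in totals}


def disparity_flags(rates, threshold=0.8):
    """Flag groups whose approval rate is below `threshold` x the highest group's rate."""
    best = max(rates.values())
    return sorted(g for g, r in rates.items() if r < threshold * best)


# Hypothetical month of decisions: 10 applicants per group.
records = (
    [("Group A", i < 8) for i in range(10)]    # 8 of 10 approved
    + [("Group B", i < 6) for i in range(10)]  # 6 of 10 approved
    + [("Group C", i < 7) for i in range(10)]  # 7 of 10 approved
)

rates = approval_rates(records)
print(rates)
print(disparity_flags(rates))
```

Group B's 60% approval rate is below 80% of Group A's 80% rate, so it is flagged for review. With small monthly counts, ratios like this swing widely, so a real monitoring process would also set a minimum group size before treating a flag as meaningful.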

Building a Governance Framework That Actually Protects Clients

Effective AI governance for nonprofits serving vulnerable populations isn't primarily a technical challenge. It's a policy and culture challenge. The organizations that protect their clients most effectively from algorithmic harm are those that have made deliberate choices about values, built clear policies from those values, and created accountability mechanisms that don't rely on technical staff who may or may not exist.

A risk-tiering approach to AI tools is a practical starting point. Not all AI uses carry equal risk of client harm. Administrative tools that help with scheduling, content creation, or internal communication have minimal direct impact on service access. AI tools that screen clients for eligibility, determine priority order for services, or generate recommendations that affect resource allocation have high direct impact. These categories deserve fundamentally different levels of oversight.

For high-impact tools, vendor accountability must be built into procurement decisions and contracts. Before adopting any AI system that affects service access, organizations should require: documentation of bias testing and validation studies, audit rights that allow the nonprofit to examine outcome data disaggregated by demographics, clear contractual responsibility for identified bias issues, and the right to terminate based on equity performance. These requirements are increasingly standard in responsible AI procurement, and vendors who resist them should be treated with significant skepticism.

Client transparency is another essential element that most organizations handle poorly. When AI influences a service decision, clients should know it. This doesn't mean overwhelming people with technical explanations; it means plain-language disclosure: "Our process uses an automated assessment to help determine eligibility. You have the right to request a manual review of your case." This transparency is both ethically required and practically valuable: clients who understand that a human can review their case are more likely to exercise that right when they believe the system got it wrong.
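One way to keep this disclosure consistent is to generate every denial notice from a single template that always includes the automated-assessment disclosure, the reason in plain language, and how to request review. A minimal sketch in Python; the class, field names, and 30-day appeal window are hypothetical placeholders for your program's actual terms:

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class DenialNotice:
    client_name: str
    reason: str                   # plain-language reason written by staff, not a model code
    decided_on: date
    appeal_window_days: int = 30  # hypothetical; use your program's actual appeal period

    def appeal_deadline(self) -> date:
        return self.decided_on + timedelta(days=self.appeal_window_days)

    def render(self) -> str:
        return (
            f"Dear {self.client_name},\n"
            "Our process uses an automated assessment to help determine eligibility. "
            "You have the right to request a manual review of your case.\n"
            f"Reason for this decision: {self.reason}\n"
            "To request a review by a staff member, contact us by "
            f"{self.appeal_deadline():%B %d, %Y}."
        )


notice = DenialNotice(
    client_name="Jordan",
    reason="We could not confirm your current address from the documents provided.",
    decided_on=date(2025, 3, 3),
)
print(notice.render())
```

Because the disclosure and review language are fixed in the template, no individual notice can leave out the client's right to a human review.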

AI Risk Tier Framework for Service Delivery

Apply different levels of oversight based on potential impact on clients

Green Tier: Low Client Impact

Administrative tools, content creation, scheduling, internal communication

Oversight: Standard vendor review, staff training on responsible use, periodic check-ins

Yellow Tier: Medium Client Impact

Outreach prioritization, resource recommendations, case management support that staff review before acting

Oversight: Documented human review before recommendations are acted on, quarterly equity monitoring, staff override authority

Red Tier: High Client Impact

Service eligibility determination, benefit access decisions, program placement, priority assignment affecting access

Oversight: Mandatory human review for all denials, bias audit before deployment and quarterly after, client appeal rights, vendor equity accountability in contract, leadership sign-off required
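The tier framework can be encoded so that every tool in an inventory receives a tier and a matching oversight checklist. This sketch reduces tier assignment to two yes/no questions, which is a simplification of the judgment the framework calls for; the question wording is an assumption, while the oversight items are taken from the tiers above.

```python
from enum import Enum


class Tier(Enum):
    GREEN = "Low client impact"
    YELLOW = "Medium client impact"
    RED = "High client impact"


# Oversight requirements per tier, taken from the framework above.
OVERSIGHT = {
    Tier.GREEN: [
        "Standard vendor review",
        "Staff training on responsible use",
        "Periodic check-ins",
    ],
    Tier.YELLOW: [
        "Documented human review before recommendations are acted on",
        "Quarterly equity monitoring",
        "Staff override authority",
    ],
    Tier.RED: [
        "Mandatory human review for all denials",
        "Bias audit before deployment and quarterly after",
        "Client appeal rights",
        "Vendor equity accountability in contract",
        "Leadership sign-off required",
    ],
}


def assign_tier(decides_access: bool, shapes_client_services: bool) -> Tier:
    """Hypothetical two-question rule.
    decides_access: does the tool determine eligibility, benefits, placement,
        or priority that affects access?
    shapes_client_services: does it inform client-facing work (outreach,
        recommendations, case support) that staff review before acting?
    """
    if decides_access:
        return Tier.RED
    if shapes_client_services:
        return Tier.YELLOW
    return Tier.GREEN


tier = assign_tier(decides_access=False, shapes_client_services=True)
print(tier.name, tier.value)
for item in OVERSIGHT[tier]:
    print("-", item)
```

Checking the access question first means a tool that does both things always lands in the red tier; when a tool's tier is genuinely in doubt, applying the stricter checklist until it is shown to belong lower is the safer default.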

Community engagement in AI governance decisions is not optional for organizations serving vulnerable populations. The people most at risk from algorithmic denials (those with thin data trails, complex documentation situations, and histories of systemic exclusion) are also the people most likely to identify problems that staff won't notice from the inside. Building genuine feedback mechanisms is a necessary element of ethical AI governance: not surveys that never affect decisions, but real forums where client concerns can trigger review.

For organizations that lack internal technical capacity, partnerships with university researchers, technology nonprofits like Bayes Impact or DataKind, or legal aid organizations that work on algorithmic accountability can provide both bias auditing support and policy development assistance. These partnerships are often available at low or no cost, particularly for organizations serving marginalized communities.

When You're Not the One Using the Algorithm

Many nonprofits encounter algorithmic denial not through their own AI deployments but through government systems that their clients navigate. Benefit eligibility systems, housing waitlist algorithms, child welfare risk assessment tools, and public health service prioritization systems often rely on automated or AI-driven tools that nonprofits didn't design and don't control.

Even in this position, organizations have important options. Staff who work directly with clients need to understand how these external systems work, what their documented failure modes are, and what appeal rights clients have. This is fundamentally a training issue: case managers who know that a particular government system has documented bias for certain documentation types or demographic groups can help clients prepare applications that reduce the likelihood of wrongful denial and can support appeals when denials occur.

Tracking patterns in algorithmic denials from external systems also creates the evidence base for advocacy. If your organization consistently sees clients wrongly denied by a particular government algorithm, that pattern, systematically documented, can support legal challenges, regulatory complaints, or legislative advocacy for algorithm accountability. Organizations like the Electronic Privacy Information Center (EPIC), the Lawyers' Committee for Civil Rights Under Law, and state-level legal aid organizations have brought successful challenges to harmful algorithmic systems when supported by documented evidence of impact.

Building Your Organization's Algorithmic Advocacy Capacity

- Train frontline staff to identify and document patterns in algorithmic denials from external systems, including noting the demographic characteristics of affected clients

- Create standardized internal tracking for external algorithmic denials, including the system, the stated denial reason, and outcome of any appeal

- Build relationships with legal aid organizations that work on algorithmic accountability to share data and get support for complex appeals

- Participate in coalitions and sector networks that monitor AI use in government benefit systems and advocate for algorithmic accountability legislation

- Use your documented evidence of client harm to inform public comment periods when government agencies propose automated systems affecting your community
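The tracking described in these bullets can start as a shared spreadsheet, but a structured record makes patterns easier to surface. A minimal sketch in Python; the system names, denial reasons, and outcome labels are hypothetical, and a real tracker would also need the demographic fields the first bullet calls for, handled under your organization's privacy practices:

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Optional


@dataclass
class ExternalDenial:
    system: str                           # the external system that issued the denial
    stated_reason: str                    # reason as written on the denial notice
    appeal_outcome: Optional[str] = None  # "overturned", "upheld", or None if undecided


def overturn_summary(denials):
    """For each (system, stated reason), return (decided appeals, share overturned)."""
    decided = defaultdict(int)
    overturned = defaultdict(int)
    for d in denials:
        if d.appeal_outcome not in ("overturned", "upheld"):
            continue  # no appeal decision yet, so it can't inform the rate
        key = (d.system, d.stated_reason)
        decided[key] += 1
        if d.appeal_outcome == "overturned":
            overturned[key] += 1
    return {key: (n, overturned[key] / n) for key, n in decided.items()}


# Hypothetical tracking log.
denials = [
    ExternalDenial("State benefits portal", "missing verification", "overturned"),
    ExternalDenial("State benefits portal", "missing verification", "overturned"),
    ExternalDenial("State benefits portal", "missing verification", "upheld"),
    ExternalDenial("State benefits portal", "missing verification"),
    ExternalDenial("County housing waitlist", "incomplete application", "upheld"),
]

for (system, reason), (n, rate) in overturn_summary(denials).items():
    print(f"{system} / {reason}: {rate:.0%} overturned across {n} decided appeal(s)")
```

A high overturn rate concentrated on one system and one stated reason is the kind of documented pattern that legal aid partners, regulators, and public comment submissions can put to use.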

The Ethical Obligation Is Organizational, Not Technical

The 47% of nonprofits that use AI without any governance policy aren't merely committing a technical oversight. They're making an ethical choice, perhaps without recognizing it as such. Every AI deployment without human override capacity, every system without equity monitoring, every tool adopted without community input is a choice to prioritize operational efficiency over the well-being of the people the organization serves.

The good news is that building genuine protections against algorithmic harm doesn't require technical staff you don't have. It requires clear values, deliberate policies, and the organizational will to hold vendors and systems accountable to those values. Free policy templates from NTEN, Fast Forward, and Whole Whale provide starting frameworks. Partnerships with technology nonprofits and legal aid organizations can fill technical gaps. Community engagement is always within reach.

What the organizations that get this right share is a foundational commitment: that their clients' access to services is more important than operational efficiency, and that any AI tool threatening that access will be constrained, corrected, or abandoned. When the mission is to serve people in need, that's the only acceptable position. For related perspectives, explore our guides on keeping humans central to AI decisions, responsible AI practices for nonprofits, and building AI ethics governance structures.

Build AI Governance That Protects Your Clients

We help nonprofits audit current AI use, develop equity monitoring frameworks, and build governance policies that keep vulnerable populations protected from algorithmic harm.