Reactive, Operational, Strategic: A Three-Stage AI Maturity Model for Nonprofits

The vast majority of nonprofits now use AI in some form, but only a small fraction see real impact. The difference is not which tools they use; it is how deeply AI is embedded in their work. This three-stage maturity model gives nonprofit leaders a way to diagnose where their organization sits today and a concrete path to the next stage.

When the 2026 Nonprofit AI Adoption Report from Virtuous and Fundraising.AI surveyed 346 organizations, it surfaced a number that has since reshaped sector conversations: 92 percent of nonprofits report using AI in some capacity, while only 7 percent say it has meaningfully expanded what their teams can accomplish. That gap is not a story about access to tools. Almost every nonprofit now has access to ChatGPT, Claude, Copilot, or a CRM with embedded AI features. The gap is about depth, about whether AI is a personal productivity hack for individual staff or a system that has changed how the organization actually operates.

Inside that gap, the report identifies three distinct stages of adoption that map remarkably well to what we have observed working with nonprofit leaders across program, fundraising, and operations functions. Most nonprofits are in the first stage, where AI is reactive and individual. A smaller group has moved to the second stage, where AI is operational across team workflows. A very small group has reached the third stage, where AI is embedded into goals, budgets, and performance indicators in a way that changes outcomes, not just outputs.

This article presents that three-stage maturity model in detail. For each stage we describe the signals that tell you an organization is there, the value the stage produces, and the limits that prevent it from going further on its own. We then describe the specific changes in process, governance, and leadership that move a nonprofit from one stage to the next. The goal is not to label organizations or rank them against each other. The goal is to give nonprofit leaders a diagnostic lens so they can place their organization honestly, identify the highest-leverage next step, and stop spending time on activities that look like progress but amount to running on a treadmill.

A few caveats before we begin. Maturity is not uniform across an organization. A development team can be operational while finance is still reactive. Maturity also does not progress automatically with time or budget. Many nonprofits have been using AI heavily for two years and remain firmly in stage one. The transitions between stages are the result of deliberate choices, and they are mostly about process and governance rather than technology. Finally, the goal of this model is not to push every nonprofit to stage three. For some organizations, well-run stage two is the right destination given size, mission, and risk profile. The point is to know where you are and to move with intention.

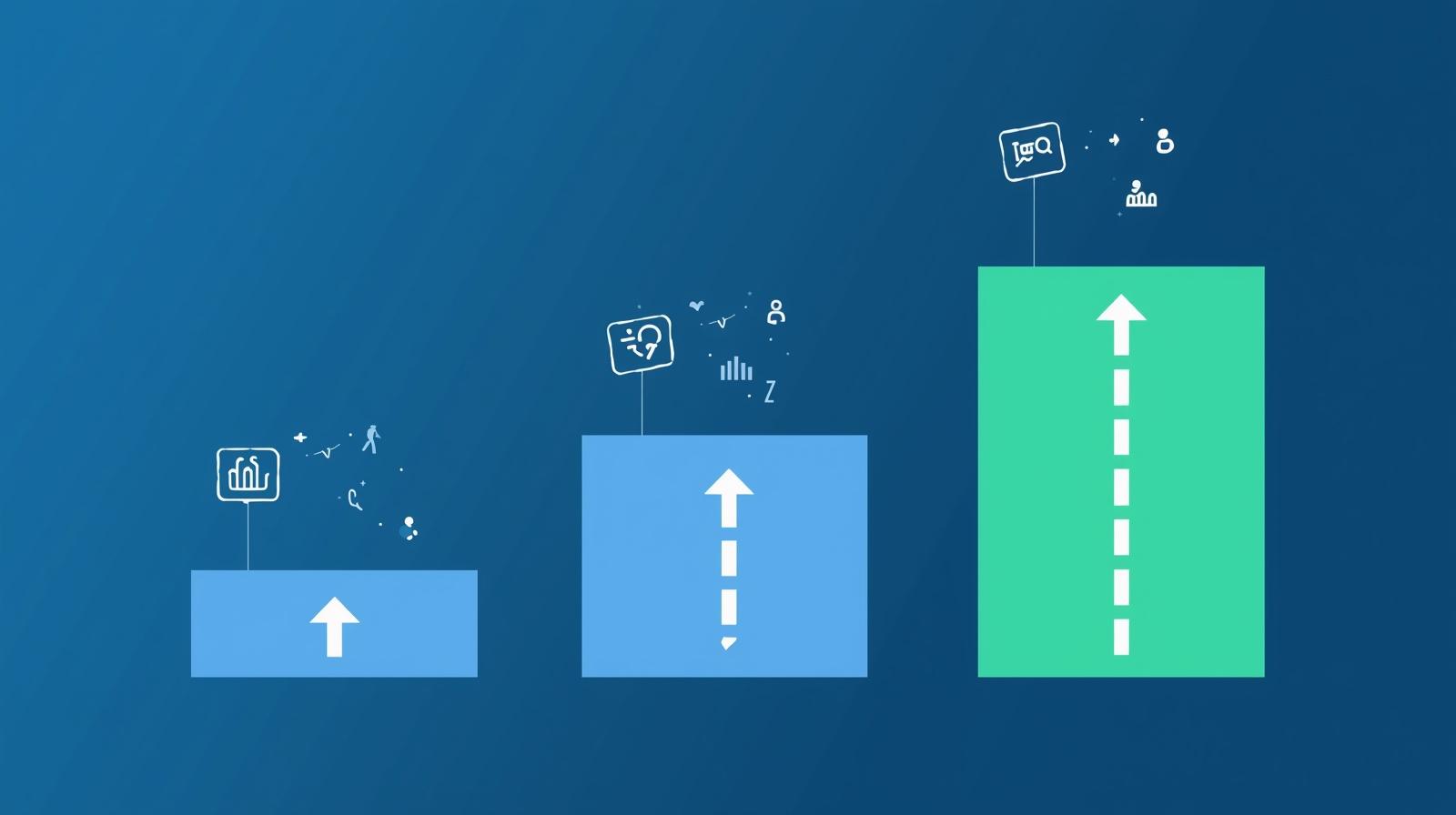

The Three Stages at a Glance

Before exploring each stage in depth, here is the overall shape of the model. Each stage describes how AI is used, who uses it, and what the organization gets in return. The progression is not about using more AI; it is about using AI in a fundamentally different way.

Stage 1: Reactive

Individual, ad hoc use

Staff use AI tools when they remember to, for one-off tasks like drafting an email or summarizing a document. Use varies wildly by person. Results stay in personal accounts. The organization gets faster individual outputs but no compounding value.

Stage 2: Operational

Team-level workflows

Specific workflows have been documented, standardized, and shared. Whole teams run AI through these workflows the same way. The organization gets predictable productivity gains in defined functions and a shared baseline of capability.

Stage 3: Strategic

Embedded in goals and budgets

AI is part of how the organization sets goals, allocates resources, and measures success. Leadership tracks AI-enabled outcomes the same way it tracks any other strategic priority. The organization changes what is possible, not just how fast it does what it already did.

The report's headline numbers map directly onto these stages. Roughly 65 percent of nonprofits are in stage one, 18 percent in stage two, and 7 percent in stage three. The remaining organizations report no AI use. That distribution explains the apparent paradox of high adoption and low impact: most adoption is happening at a stage that, by design, cannot produce organizational-level impact. Individual productivity gains do not aggregate into mission outcomes when there is no system to capture and compound them.

Stage 1: Reactive AI Use

The reactive stage is where almost every nonprofit's AI journey begins, and where most of them stay. It is characterized by individual staff using AI tools as personal productivity aids, with no coordination, no shared standards, and no organizational visibility. To read more about moving out of this stage, see our earlier piece on moving from reactive to operational AI use.

Signals you are in stage one

- Staff use ChatGPT, Claude, or similar tools through personal or unmanaged accounts.

- If you asked five people on the same team how they use AI, you would get five different answers.

- Prompts, outputs, and lessons stay in private chats and personal documents.

- There is no written policy on what data can or cannot go into AI tools, or the policy exists but no one is following it.

- Leadership cannot answer questions like "where is AI saving us the most time" with anything but anecdotes.

- AI use shows up in informal conversation but never in formal goal-setting, performance reviews, or budgets.

What stage one produces, and what it cannot

Reactive AI use is not worthless. Individual staff genuinely save time. A communications associate drafts a newsletter faster. A program officer summarizes a 40-page report in five minutes. A development director gets a usable first draft of a grant proposal. These wins are real and they compound for the individual.

What stage one cannot produce is organizational capability. Because each staff member has discovered their own techniques, the organization cannot move someone from one role to another and expect them to be productive with AI on day one. When the staff member leaves, their AI-enabled productivity leaves with them. When a board member asks how the organization is using AI, the executive director must rely on stories rather than data. And because no one is comparing outputs across users, the organization cannot tell whether its AI use is actually good or whether it has normalized mediocre work.

The hidden cost of staying in stage one is opportunity cost. Every month an organization stays here, it is paying the full price of AI tooling and disruption without capturing the system-level returns. A common pattern is the executive director who hears about AI from every direction, encourages the team to use it, watches a year go by, and then asks why nothing seems to have changed at the organizational level. The answer is almost always that the organization stayed reactive.

Why nonprofits get stuck here

- No clear owner. AI is everyone's job, which means it is no one's job. Without a named person or small team responsible for moving AI work forward, individual gains never get systematized.

- Productivity feels like enough. Because individuals are seeing real time savings, leadership assumes the organization is making progress and does not see the need to build infrastructure.

- The shift to stage two requires effort with no immediate reward. Documenting workflows, standardizing prompts, and building shared infrastructure feels like overhead to a team that is already getting personal value from AI.

- Governance fears block sharing. Without clear data and privacy guidelines, staff hesitate to share prompts that include real client or donor information, so each person reinvents the same workflow privately.

Stage 2: Operational AI Use

The operational stage is the first place where AI starts to look like infrastructure rather than tooling. A team identifies a recurring workflow, agrees on how AI will fit into it, documents the approach, and runs the workflow the same way across the team. About 18 percent of nonprofits report being here in at least some functions. The defining feature is not how much AI is used, but that AI use has been collectively decided rather than individually invented.

Signals you are in stage two

- At least one workflow has a documented "this is how we do it with AI" standard that the whole team follows.

- Shared prompt libraries, templates, or playbooks exist in a place the team can find them.

- A new hire joining a team can be productive with AI in days, not months, because the workflows are written down.

- Someone owns the workflows, reviews them periodically, and updates them when better approaches emerge.

- There is a written AI use policy that staff actually know about and that covers what data can be used, what cannot, and how to handle edge cases.

- The organization can name three to five workflows where AI is part of the standard process, not an optional add-on.

What stage two produces, and what it cannot

Operational AI use produces predictable productivity gains in defined functions. A development team with a documented grant-research workflow can process twice as many prospects as before. A program team with a standardized intake summary workflow gets consistent notes regardless of who is on duty. A communications team with a shared content-repurposing approach can turn one piece of long-form content into a week's worth of channel-appropriate posts. Importantly, these gains survive staff turnover because the knowledge lives in the workflow rather than in the individual.

What stage two cannot produce on its own is changed outcomes. Faster grant research does not necessarily mean more grants won. Faster intake notes do not necessarily mean better client outcomes. Stage two delivers efficiency, but efficiency in a vacuum just means the same work happens faster. Turning efficiency into impact requires the leap to stage three, where AI capability becomes part of how the organization sets its goals in the first place. See our piece on the small fraction of nonprofits with documented, repeatable AI workflows for a closer look at what stage two looks like in practice.

How to move from stage one to stage two

The four moves that unlock operational AI

- Pick one workflow, not ten. Choose one recurring task that the whole team does, such as grant research, donor research, meeting summaries, intake notes, social media drafts, or board memos. Resist the urge to systematize everything at once.

- Name an owner. Pick a person, not a committee, to own the workflow. They are responsible for documenting it, training the team, and updating it as the work evolves.

- Document, then standardize. Write down the workflow step by step, including the exact prompts, the tools used, and the review criteria. Test it with two or three people. Refine. Then make it the official way the team does the work.

- Put governance in writing. A two-page AI use policy beats a 20-page one no one reads. Cover what data can be used, where, by whom, and what to do when something feels off.

The team that does this work is often a small group of internal AI champions, not the IT department. Champions are the people who already love AI, who are already running unsanctioned experiments, and who can translate between the technology and the day-to-day work. Giving them time, authority, and a small budget is one of the highest-return moves a nonprofit can make.

Stage 3: Strategic AI Integration

Stage three is where the small group of nonprofits seeing transformational impact actually operates. About 7 percent of organizations report being here. At this stage, AI is not a tool layered onto existing work; it is part of how the organization decides what work to do, how much of it to do, and how to measure success.

Signals you are in stage three

- The annual strategic plan or operating plan includes goals that explicitly depend on AI capability, not just AI usage.

- Departments propose budgets that account for AI tooling, training, and oversight as line items rather than absorbing them into general operations.

- Performance reviews and team scorecards include outcomes that AI enabled, such as "donors retained per development staff member" rather than just "donors retained."

- The board hears about AI not as a project update but as part of the organization's overall capability narrative.

- Leadership can answer the question "what would we stop doing if AI broke tomorrow" with specifics, because AI is genuinely load-bearing.

- The organization is making bets that would have been impossible without AI, such as serving more clients per staff member, reaching new geographies with the same team, or running programs that its previous capacity could not support.

What stage three produces

The defining output of stage three is changed possibility. A small development team manages a portfolio that would have required three times the staff a few years ago. A program organization runs intake in five languages without hiring multilingual staff for each. A research-driven nonprofit publishes analyses on the same timelines that previously belonged to organizations five times its size. None of this is AI making old work faster. It is AI making new work possible.

Stage three also produces strategic clarity. When AI is embedded in goals and budgets, leadership stops asking "should we be doing more with AI" and starts asking the much better question of "given our AI capability, what is the next bet worth making." That shift from defense to offense is, more than any tooling decision, what separates the 7 percent from the 18 percent. For a closer look at what this small minority does differently, see our piece on why so few nonprofits see real impact from AI.

How to move from stage two to stage three

The five moves that unlock strategic AI

- Make AI a board-level conversation. Not as a topic to be briefed on, but as a lens for evaluating strategy. The board should ask "what is AI changing about how we should pursue this goal" before approving any major plan.

- Bake AI into the strategic plan. If your strategic plan does not name AI as a means to specific goals, the organization will keep treating it as a side project. Name the goals it should help reach and the metrics that will tell you it is working.

- Move AI into the budget. Stage three organizations stop hiding AI costs in software lines. They budget for tooling, training, evaluation, and oversight as named line items, which lets leadership manage the spend the way they manage any other strategic investment.

- Define AI-enabled outcomes, not AI-enabled outputs. Outputs are how many emails got drafted with AI. Outcomes are how many donor relationships moved forward, how many clients got served, how many programs got launched. Stage three measures the latter.

- Build the muscle of disciplined experimentation. Strategic AI organizations run small, time-boxed experiments to test whether new AI capabilities are worth investing in, with explicit success criteria and an honest willingness to stop something that is not working.
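Writing the success criterion down before an experiment starts is what makes an honest stop decision possible. The sketch below is a minimal, hypothetical illustration: it assumes a single outcome metric where higher is better, and the experiment name, metric, target, and dates are placeholders rather than figures from the report.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class AIExperiment:
    # Hypothetical record of one time-boxed AI experiment.
    name: str
    outcome_metric: str  # an outcome (e.g. retention), not an output (e.g. emails drafted)
    target: float        # success criterion, fixed before the experiment starts
    end_date: date       # the time box


def decide(exp: AIExperiment, observed: float, today: date) -> str:
    """Return 'keep running', 'invest', or 'stop' for an experiment.

    Assumes higher values of the outcome metric are better.
    """
    if today < exp.end_date:
        return "keep running"  # the time box has not expired yet
    if observed >= exp.target:
        return "invest"        # met the criterion agreed up front
    return "stop"              # missed it: stop rather than extend indefinitely


# Hypothetical example: a pilot judged on donor retention rate.
pilot = AIExperiment("AI-assisted donor follow-up", "donor retention rate", 0.50, date(2025, 6, 30))
print(decide(pilot, 0.47, date(2025, 7, 1)))
```

The key design choice is that the target and end date live in the record before any results arrive, so the stop decision is mechanical rather than negotiated after the fact.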

How to Use the Model in Your Organization

A maturity model is only useful if it changes what an organization does. Here is a practical approach for putting the three-stage framework to work, whether you are an executive director, board member, or department head.

Step 1: Diagnose honestly

Pick three to five functions in your organization, such as development, programs, finance, communications, and operations. For each, walk through the signals listed earlier and place the function at stage one, stage two, or stage three. Do this honestly, not aspirationally. Most nonprofits discover that they are at stage one in every function, with one or two pockets of stage two. That is normal and useful information.

Step 2: Pick one function to advance

Resist the temptation to advance everything at once. Choose one function where the next stage is achievable in a reasonable timeframe and where the impact would matter. Often the right pick is the function with the most ad hoc AI use already happening, because the demand is there and the team is willing.

Step 3: Name an owner and a deadline

"We will move development from stage one to stage two by the end of Q3" is the kind of commitment that produces results. "We need to use AI more strategically" is the kind that does not. Pair the named function with a named owner and a date.

Step 4: Build the artifacts that define the next stage

Each stage has tangible artifacts. Stage two needs a documented workflow, a shared prompt library, and a written policy. Stage three needs goals in the strategic plan, AI line items in the budget, and AI-enabled outcomes in the scorecards. If those artifacts do not exist at the end of your timeframe, you have not actually advanced.
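For teams that want to track the diagnosis in a spreadsheet or script, the placement rule can be made explicit. The sketch below is a hypothetical illustration, not a tool from the report: it assumes each function is scored only on the artifacts listed in Step 4, and every field and function name is invented for the example. It places each function separately, and it credits stage three only when the stage-two artifacts are also in place.

```python
from dataclasses import dataclass


@dataclass
class FunctionArtifacts:
    # Hypothetical checklist for one function, using the Step 4 artifacts.
    name: str
    documented_workflow: bool = False
    shared_prompt_library: bool = False
    written_ai_policy: bool = False
    ai_goals_in_strategic_plan: bool = False
    ai_budget_line_items: bool = False
    ai_outcomes_in_scorecards: bool = False


def stage(f: FunctionArtifacts) -> int:
    """Place a single function at stage 1, 2, or 3."""
    has_stage_two = f.documented_workflow and f.shared_prompt_library and f.written_ai_policy
    has_stage_three = (
        f.ai_goals_in_strategic_plan and f.ai_budget_line_items and f.ai_outcomes_in_scorecards
    )
    # Stage three only counts on top of stage two: stages cannot be skipped.
    if has_stage_two and has_stage_three:
        return 3
    if has_stage_two:
        return 2
    return 1


def diagnose(functions: list[FunctionArtifacts]) -> dict[str, int]:
    # One placement per function, never a single organization-wide score.
    return {f.name: stage(f) for f in functions}


org = [
    FunctionArtifacts("development", True, True, True),
    FunctionArtifacts("finance"),
    FunctionArtifacts(
        "programs",
        ai_goals_in_strategic_plan=True,
        ai_budget_line_items=True,
        ai_outcomes_in_scorecards=True,
    ),
]
print(diagnose(org))  # {'development': 2, 'finance': 1, 'programs': 1}
```

In this example, programs has the stage-three paperwork but none of the stage-two artifacts, so it is placed at stage one. That is the skipping-stages problem in miniature.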

Step 5: Revisit the diagnosis every six months

AI capability shifts quickly, and so does your organization. A function that was solidly stage two a year ago may have drifted back to stage one because the owner left or the documentation was not maintained. Treat the diagnosis as a recurring check, not a one-time exercise.

Common Misuses of the Model

Like any framework, the three-stage model can be misused in ways that defeat its purpose. A few patterns to watch for.

Treating maturity as a uniform organizational score

Nonprofits rarely have a single maturity level. Development might be operational while finance is reactive and programs are somewhere in between. Trying to assign one number to the whole organization hides the differences that actually matter for action.

Pushing to stage three regardless of organizational fit

For some nonprofits, stage three is the wrong target. A small organization with three staff and a narrow mission may be best served by a well-run stage two in one function and nothing else. Forcing strategic integration onto an organization that is not ready burns time and trust.

Confusing tool adoption with maturity

Buying ChatGPT Enterprise licenses or rolling out Microsoft Copilot does not move you up the stages. Procurement is a stage-one activity unless it is paired with workflow design, governance, and goal-setting work. Many organizations spend significantly on tools and discover a year later that they are still firmly in stage one.

Skipping stages

Trying to move from stage one to stage three without consolidating stage two is a common and expensive mistake. The artifacts of stage two, documented workflows and shared standards, are what make strategic integration possible. Without them, stage three is theater.

Conclusion

The three-stage maturity model exists because the headline numbers about nonprofit AI adoption hide more than they reveal. Saying that 92 percent of nonprofits use AI is true but unhelpful, because that statistic groups together organizations doing fundamentally different things. The reactive nonprofit is using AI in a way that almost cannot, by design, produce organizational impact. The operational nonprofit is using AI in a way that produces predictable efficiency. The strategic nonprofit is using AI in a way that changes what the organization can attempt at all.

The good news is that the moves required to advance through the stages are not technical. They are organizational. Pick a workflow. Name an owner. Document the approach. Write the policy. Put AI into the strategic plan. Put AI into the budget. Measure AI-enabled outcomes rather than AI-enabled outputs. None of these moves require deep technical expertise. They require leadership willingness to treat AI as a system to be built rather than a tool to be used.

The further good news is that nonprofits do not need to be in the 7 percent to see real value. A well-executed stage two beats a chaotic stage three every time. Most organizations should aim for stage two in their two or three most important functions before they think about stage three at all. The point is to move with intention, to know what stage you are in, to know what stage you want to reach, and to do the specific work that gets you there.

Two years from now, the gap between the 7 percent and the rest of the 92 percent will widen, not narrow. Organizations that have built operational and strategic AI capability will be running programs and pursuing missions that simply are not feasible without it. Organizations that stayed reactive will still be saving individuals a few hours a week while wondering why nothing seems to have changed. The maturity model is not a ranking. It is a map. The question is whether your organization is ready to use it.
