From Pilot to Production: How to Scale AI Projects Beyond Initial Experiments

Every nonprofit AI success story begins the same way: a motivated team, a carefully scoped pilot, early results that exceed expectations, and a growing sense that this could transform how the organization works. Then, for most organizations, the story stalls. The pilot succeeds, and nothing happens at scale. Understanding why, and what to do differently, is one of the most consequential questions in nonprofit AI strategy today.

The statistics describing the pilot-to-production gap are striking. MIT research published in 2025 found that the vast majority of enterprise AI pilots fail to deliver measurable returns. The average enterprise scrapped nearly half of its AI pilots before reaching production. Only a small fraction of organizations report successfully moving AI from testing into real-world implementation at scale. For nonprofits, the picture is often worse, compounded by limited budgets, stretched staff, and funders who rarely cover the sustained technology investment that scaling requires.

This is not a technology problem. The models work. The tools have become more capable and accessible than at any point in history. The failure is organizational, and it is almost entirely predictable. AI pilots succeed because they are bounded, supervised, and resourced with unusual attention. The motivated champion who drove the pilot is present every day. The dataset was carefully curated. Users were selected for enthusiasm. Those conditions almost never transfer automatically to broader deployment.

What distinguishes the organizations that successfully scale AI is not superior technology or larger budgets. According to BCG's comprehensive AI Radar 2025 research, the difference comes down to how organizations allocate their scaling effort: roughly 70% toward people, processes, and culture, 20% toward data and technology infrastructure, and only 10% toward algorithms and models themselves. Most organizations get this ratio exactly backwards, investing heavily in the technical components while under-investing in the organizational transformation that determines whether any of it sticks.

This article is a practical guide for nonprofit leaders, program directors, and operations staff who have run successful AI pilots and want to translate that success into lasting organizational capability. We'll examine why pilots fail to scale, how to assess whether a pilot is genuinely ready for production, what infrastructure and governance a production system actually requires, and how to manage the human dimensions of scaling in ways that build rather than exhaust organizational trust.

Why AI Pilots Succeed But Fail to Scale

Understanding the failure modes is the prerequisite for avoiding them. Pilots and production environments are fundamentally different contexts, and what makes pilots succeed often actively works against production scaling.

The Controlled Conditions Problem

AI pilots typically operate in artificially favorable conditions. Data is manually cleaned for the pilot period. Users are selected because they're enthusiastic early adopters. The champion is closely supervising the process and can intervene when things go wrong. These conditions rarely survive contact with production reality.

Production systems serve all users, not just willing volunteers. They operate with data that is messy, inconsistent, and drawn from multiple sources rather than a curated dataset. They run without a dedicated supervisor ready to troubleshoot every edge case. The same AI that performed impressively under controlled conditions can degrade significantly when these supports are removed.

The Funding Paradox

Nonprofits face a particular version of the scaling challenge that enterprise organizations do not. Research from Candid found that the vast majority of nonprofits developing AI solutions name additional funding as their top need for scaling, yet funders routinely require demonstrated impact before they invest. Organizations need capital to prove impact but need proven impact to unlock capital.

Most foundation and government grant programs fund innovative projects, not operational infrastructure. AI pilots, which fit the innovative-project mold, often attract grant support, but the ongoing costs of maintaining, monitoring, and improving production AI systems are far harder to fund through traditional sources. Organizations that succeed at scaling treat AI as operational infrastructure from the beginning and build that framing into their grant narratives and budget requests.

The Governance Vacuum

The vast majority of nonprofits use AI, but only a small fraction have formal policies governing its use. Pilots can operate informally under the oversight of a champion; production cannot. Production AI requires defined ownership, clear accountability for when things go wrong, documented policies for data handling and model updates, and regular audit processes.

Organizations that attempt to scale without this governance infrastructure often encounter crises that erode organizational trust in AI more broadly. A model that produces an inaccurate output for a volunteer user during a pilot is a learning experience. The same model producing inaccurate outputs at scale, with no clear ownership or correction process, becomes an organizational incident.

Spreading Too Thin

BCG's analysis of AI maturity found that organizations focusing on a small number of priority use cases generate more than twice the ROI of those spreading effort across many. Many nonprofits, excited by AI's potential, attempt to scale multiple pilots simultaneously. This dilutes the organizational attention, budget, and change management capacity needed to move any single pilot to a stable production system. Scaling AI successfully requires saying no to many things that seem promising in order to say yes deeply to a few.

Assessing Whether Your Pilot Is Ready for Production

Not every successful pilot should be scaled, and not every pilot that worked in controlled conditions will survive production. Before committing organizational resources to scaling, a structured readiness assessment helps distinguish between pilots that are genuinely ready and those that need further development.

Business and Mission Readiness

- Success metrics were defined before the pilot and met consistently for at least 60 to 90 days

- Metrics are tied to mission outcomes, not just technical performance

- At least one organizational leader beyond the original champion is actively advocating for the project

- Pilot users report genuine workflow improvement, not just novelty interest

- The budget for production deployment exists as a formal line item

Data and Technical Readiness

- Data sources are documented and accessible beyond the pilot's curated dataset

- A data governance baseline exists, even if not yet mature

- Integration pathways with operational systems have been evaluated

- Security and compliance requirements for the use case are documented

- Monitoring approach for ongoing model performance has been designed

Organizational Readiness

- A change impact assessment has been completed for affected teams

- Change champions have been identified in each affected department

- Staff training has been designed before rollout, not planned for after

- Leadership has communicated honestly about how roles will change

- Channels for staff feedback and concern reporting have been established

Governance Readiness

- Clear ownership has been defined for the production system

- A formal AI policy (or at least a draft policy) is in place

- Explicit kill/scale criteria have been defined in advance

- An audit cadence for ongoing review of AI performance and bias has been established

- Incident response procedures for AI failures exist

Organizations frequently discover through this assessment that they are ready on the technical and business dimensions but not on the governance and organizational dimensions, or vice versa. Rather than treating readiness as a binary pass/fail, use it to identify the specific gaps that need to close before scaling. Most organizations need one to three months of focused preparation to address readiness gaps before a pilot is genuinely ready for broader deployment.
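To make the "gaps, not pass/fail" approach concrete, here is a minimal Python sketch that scores each readiness dimension and lists the unmet criteria. The ReadinessItem structure and the sample entries are hypothetical; the dimension names and criteria are taken from the checklists above, and a shared spreadsheet would serve the same purpose.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class ReadinessItem:
    dimension: str   # e.g. "Governance"
    criterion: str   # wording from the checklist
    met: bool


def dimension_scores(items: List[ReadinessItem]) -> Dict[str, float]:
    """Share of criteria met in each dimension, from 0.0 to 1.0."""
    total = Counter(item.dimension for item in items)
    met = Counter(item.dimension for item in items if item.met)
    # Counter returns 0 for dimensions with no met criteria.
    return {dim: met[dim] / total[dim] for dim in total}


def readiness_gaps(items: List[ReadinessItem]) -> Dict[str, List[str]]:
    """Unmet criteria grouped by dimension: the to-do list before scaling."""
    gaps: Dict[str, List[str]] = {}
    for item in items:
        if not item.met:
            gaps.setdefault(item.dimension, []).append(item.criterion)
    return gaps


# Hypothetical assessment: strong on business and technical readiness, weak on governance.
assessment = [
    ReadinessItem("Business and Mission", "Metrics tied to mission outcomes", True),
    ReadinessItem("Data and Technical", "Monitoring approach designed", True),
    ReadinessItem("Governance", "Clear ownership defined", False),
    ReadinessItem("Governance", "Incident response procedures exist", False),
    ReadinessItem("Organizational", "Change champions identified", True),
]

print(dimension_scores(assessment))
for dimension, criteria in readiness_gaps(assessment).items():
    print(f"{dimension}: {', '.join(criteria)}")
```

The output is a per-dimension score plus a concrete list of what to close, which maps directly onto the one-to-three-month preparation window described above.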

What Production AI Actually Requires

The gap between a pilot environment and a production environment is far larger than most organizations anticipate. Understanding what production actually demands helps organizations make realistic commitments and plan appropriately.

Data Infrastructure at Scale

From curated snapshots to real-time pipelines

Pilots typically operate on a manually cleaned, one-time snapshot of data. Production systems must pull from multiple, often inconsistent sources in real or near-real time. This requires data pipelines, ETL processes, and integration with operational systems that simply do not exist in most pilot environments. Data quality governance, which determines how data inconsistencies are handled and who is responsible for resolving them, becomes essential and ongoing rather than a one-time setup task.

For nonprofits, this often means connecting AI systems to donor management platforms, case management systems, and grant tracking databases, each with its own data structures, access controls, and quality characteristics. The data architecture required for production AI is itself a significant project, often more complex than selecting and training the AI model.

- Automated data pipelines replacing manual dataset preparation

- Data quality monitoring with defined remediation processes

- Integration with operational systems rather than isolated datasets

- Data lineage documentation tracking where each data element comes from
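A minimal sketch of automated data quality checks, assuming donor records arrive as Python dictionaries from more than one system. The field names (donor_id, email, last_gift_date), the _source lineage tag, and the 5% tolerance are illustrative assumptions, not a standard; the point is that every issue carries the row and source system it came from, so someone can own its remediation.

```python
from collections import Counter
from typing import Dict, List

# Hypothetical required fields; replace with your own schema.
REQUIRED_FIELDS = ("donor_id", "email", "last_gift_date")


def check_quality(records: List[Dict]) -> List[Dict]:
    """Return one issue per problem, tagged with the row and source system."""
    issues = []
    id_counts = Counter(r.get("donor_id") for r in records if r.get("donor_id"))
    for row, r in enumerate(records):
        source = r.get("_source", "unknown")  # lineage: which system supplied this row
        for field in REQUIRED_FIELDS:
            if not r.get(field):
                issues.append({"row": row, "source": source, "rule": f"missing {field}"})
        if id_counts.get(r.get("donor_id"), 0) > 1:
            issues.append({"row": row, "source": source, "rule": "duplicate donor_id"})
    return issues


def needs_remediation(records: List[Dict], issues: List[Dict], max_issue_rate: float = 0.05) -> bool:
    """Flag the batch when the share of rows with any issue exceeds the tolerance."""
    affected_rows = {issue["row"] for issue in issues}
    return len(affected_rows) / max(len(records), 1) > max_issue_rate


# Hypothetical batch merged from a CRM and an event registration system.
batch = [
    {"donor_id": "D1", "email": "a@example.org", "last_gift_date": "2025-01-10", "_source": "crm"},
    {"donor_id": "D2", "email": "", "last_gift_date": "2025-02-03", "_source": "events"},
    {"donor_id": "D1", "email": "a@example.org", "last_gift_date": "2025-01-10", "_source": "events"},
]
issues = check_quality(batch)
for issue in issues:
    print(issue)
print("Remediation needed:", needs_remediation(batch, issues))
```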

MLOps: The Operational Foundation

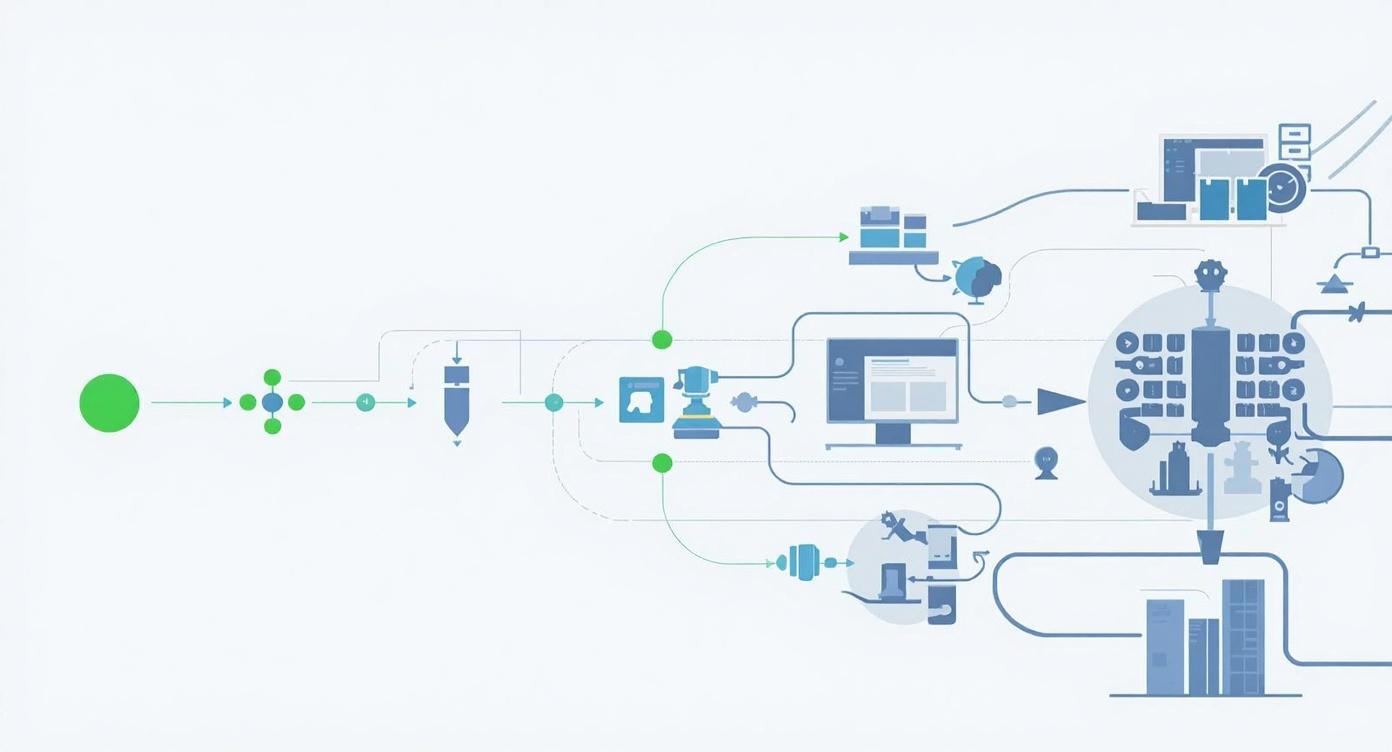

Automating the model lifecycle from training to monitoring

Machine Learning Operations, commonly called MLOps, refers to the practices and tooling that operationalize AI models in production. In a pilot, a data scientist or technically capable staff member can manually retrain the model, evaluate performance, and deploy updates. At production scale, these processes need to be automated, documented, and reliable enough to operate without constant expert intervention.

For nonprofits using off-the-shelf AI tools rather than custom models, MLOps is less about model training and more about process: monitoring the tool's outputs for quality, establishing protocols for when and how tool settings are adjusted, and maintaining documentation of how the system operates so that staff turnover does not create knowledge gaps.

Model drift is one of the most underappreciated production challenges. AI models often degrade over time as the real-world data they encounter diverges from what they were trained on. A donor engagement model trained on 2024 behavior may perform less well as donor behaviors shift. Automated monitoring that alerts when performance falls below defined thresholds prevents this gradual degradation from becoming a crisis.
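One common way to implement the threshold alert described above is a rolling accuracy check over recent predictions whose real outcomes are now known. This sketch assumes a hypothetical donor model that predicts whether a donor will give again; the 0.8 threshold, window size, and minimum sample count are placeholders to set from your own pilot baseline. For off-the-shelf tools without a single accuracy number, the same pattern can be adapted to track the share of outputs that staff reviewers mark as acceptable.

```python
from collections import deque
from typing import Optional


class RollingAccuracyMonitor:
    """Accuracy over the most recent `window` predictions whose real outcome is known."""

    def __init__(self, threshold: float, window: int = 200, min_samples: int = 50):
        self.threshold = threshold
        self.min_samples = min_samples
        self.hits = deque(maxlen=window)  # oldest results drop off automatically

    def record(self, predicted: str, actual: str) -> None:
        self.hits.append(predicted == actual)

    def accuracy(self) -> Optional[float]:
        return sum(self.hits) / len(self.hits) if self.hits else None

    def should_alert(self) -> bool:
        # Stay quiet until there are enough outcomes to judge.
        if len(self.hits) < self.min_samples:
            return False
        return self.accuracy() < self.threshold


# Hypothetical usage: the model keeps predicting "gives", but after the first
# 60 outcomes half of the donors start lapsing, simulating behavior drift.
monitor = RollingAccuracyMonitor(threshold=0.8, window=50, min_samples=20)
for i in range(100):
    actual = "gives" if i < 60 or i % 2 == 0 else "lapses"
    monitor.record("gives", actual)
    if monitor.should_alert():
        print(f"Alert after outcome {i + 1}: rolling accuracy {monitor.accuracy():.2f}")
        break
```

A rolling window is a deliberate choice: an all-time average would hide recent degradation behind months of good early results.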

Scale and Availability Requirements

From supervised experiments to always-on infrastructure

Pilot AI typically serves a small group of users in controlled hours. Production AI often needs to be available continuously, handle variable load, and recover from failures without requiring manual intervention. The compute infrastructure that is adequate for ten pilot users becomes a bottleneck for hundreds. The manual restart procedure acceptable in a pilot becomes unacceptable when frontline staff are blocked from their work.

Microsoft Azure, Google Cloud, and AWS all offer nonprofit discount programs and provide the elastic compute, redundancy, and availability guarantees that production systems require. For organizations currently running pilot AI on a single server or developer machine, migrating to cloud infrastructure is typically one of the first steps in production preparation.

Managing the Human Side of Scaling

The 70% of scaling effort that BCG's research attributes to people, processes, and culture is where most organizations underinvest. Technology scaling is complicated; organizational change is harder. Building the human infrastructure for AI at scale requires sustained leadership attention, honest communication, and investment in capabilities that do not show up in technical metrics.

Building Your AI Champion Network

The single most effective change management intervention for AI scaling is developing a distributed network of AI champions across your organization. These are not necessarily the most technically capable staff. They are the people who have experienced genuine value from AI tools, understand the change it represents for their colleagues, and are respected enough within their teams to demonstrate rather than mandate adoption.

Unlike the pilot champion model where one person drives adoption, production scaling requires champions at multiple levels and in multiple departments. A donor database administrator who champions AI for donor research, a program coordinator who champions AI for case documentation, and a finance director who champions AI for budget analysis collectively create adoption momentum that no single leader could sustain alone. For more on building this kind of distributed leadership, the AI Champions 2.0 framework offers a detailed approach.

Training That Actually Drives Adoption

Most organizations over-invest in visible training activities (workshops, webinars, and information sessions) and under-invest in the adoption support that actually changes behavior. McKinsey's 2025 analysis of AI upskilling programs found that organizations treating AI reskilling as a multi-month journey with coaching and practice achieve far better adoption than those running one-time training events.

For nonprofits with constrained training budgets, prioritizing ongoing coaching and practice over large initial training events is both more effective and often more affordable. Practical resources available at no cost include Anthropic's AI Fluency for Nonprofits course, Microsoft's Introduction to AI Skills for Nonprofits through Microsoft Learn, and NTEN's AI Resource Hub. These can supplement internal training without adding significant cost.

Role-differentiated training also matters. A development director and a program case manager need very different AI capabilities. Developing role-specific training tracks that address the actual use cases each staff function will encounter is more effective than generic AI literacy training applied uniformly across the organization.

- Plan training as a multi-month journey, not a one-time event

- Develop role-specific training tracks for each department's actual use cases

- Embed AI champions as ongoing coaches alongside formal training programs

- Create communities of practice where staff share prompts and workflows

Communicating Honestly About Role Changes

One of the greatest sources of resistance to AI adoption is anxiety about job security, and that anxiety intensifies during scaling, when it becomes clear that AI will not remain a small experiment but will touch many more roles. Organizations that attempt to sidestep this concern with reassurances that AI "won't replace anyone" often find their credibility undermined when reality proves more complicated.

Honest communication about role evolution, which acknowledges that some tasks will change or be automated while clearly articulating how freed-up time will be reinvested in mission-critical human work, builds more lasting trust than avoidance. Staff who understand specifically how their role will change, what new capabilities they will need, and how leadership will support that transition are far more likely to engage constructively with AI scaling than those left to imagine the worst. The article on overcoming AI resistance offers additional frameworks for this conversation.

A Phased Approach to Scaling

Successful AI scaling rarely happens in a single transition from pilot to full production. A phased approach, with defined checkpoints and explicit criteria for proceeding at each stage, reduces risk, enables learning, and creates opportunities to course-correct before problems become crises.

1. Controlled Expansion (Months 1-2)

Expand from the pilot cohort to a broader but still defined group, typically 3-5x the original pilot users across a single department or function. This stage tests whether pilot success generalizes beyond the most enthusiastic early adopters while maintaining enough oversight to catch and correct problems quickly. Shadow deployment, running the AI system in parallel with existing processes rather than replacing them, is particularly valuable at this stage.

- Expand user base to 3-5x pilot size within one department

- Run shadow deployment: AI and existing process in parallel

- Establish baseline production metrics before cutting over

- Collect structured feedback from new users weekly
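During shadow deployment, comparing the AI's results against the existing process on the same cases gives an early read on whether pilot performance generalizes. The sketch below assumes each case is logged with hypothetical ai_result and current_result fields and uses an assumed 90% agreement bar. Agreement measures consistency with the current process, not correctness, so disagreements should go to human review rather than be counted automatically against the AI.

```python
from typing import Dict, List


def shadow_report(cases: List[Dict], min_agreement: float = 0.9) -> Dict:
    """Compare AI results with the existing process on the same cases."""
    if not cases:
        return {"cases": 0, "agreement": None, "disagreements": [], "ready_to_cut_over": False}
    disagreements = [c["case_id"] for c in cases if c["ai_result"] != c["current_result"]]
    agreement = 1 - len(disagreements) / len(cases)
    return {
        "cases": len(cases),
        "agreement": agreement,
        "disagreements": disagreements,  # send these to human review
        "ready_to_cut_over": agreement >= min_agreement,
    }


# Hypothetical week of intake triage run both ways; only current_result is acted on.
week = [
    {"case_id": "C-101", "ai_result": "urgent", "current_result": "urgent"},
    {"case_id": "C-102", "ai_result": "routine", "current_result": "routine"},
    {"case_id": "C-103", "ai_result": "routine", "current_result": "urgent"},
    {"case_id": "C-104", "ai_result": "urgent", "current_result": "urgent"},
]
print(shadow_report(week))
```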

2. Departmental Production (Months 3-5)

With shadow deployment evidence confirming that production performance matches pilot performance, transition to full production within the initial department and begin evaluating the next deployment area. This stage focuses on stabilizing operations, addressing the edge cases that emerge at scale, and building the documentation and support infrastructure that enables sustainable ongoing operation.

- Full cutover in initial department with formal incident response ready

- Document edge cases and resolution procedures as they emerge

- Begin readiness assessment for next deployment area

- Establish automated monitoring and alerting for key metrics

3. Organizational Scaling (Months 6-12)

Having demonstrated production stability in one area, apply the documented processes and infrastructure to additional departments and use cases. This stage should be faster than the first production deployment because the foundational infrastructure, governance structures, and organizational change processes have been established. The Cisco AI Readiness Index 2025 found that the typical timeline for moving AI from pilot to production runs seven to twelve months, and organizations that try to compress this significantly often encounter preventable failures.

- Apply established infrastructure and processes to additional use cases

- Develop reusable component libraries that accelerate future deployments

- Build organizational AI governance into standard operating procedures

- Document lessons learned and update your AI strategy accordingly

Maintaining Quality at Scale

One of the most common production failures is quality degradation that happens gradually rather than suddenly. The model that performed excellently in the pilot quietly becomes less accurate, less useful, or more prone to errors as real-world conditions evolve. Active quality management prevents pilot success from eroding into production mediocrity.

Defining Metrics Before You Scale

A major reason AI pilots do not translate into production value is that they were never tied to clear organizational objectives with measurable outcomes. If pilot success is measured in academic metrics like model accuracy while production success is measured in workflow efficiency or mission outcomes, the two stages never connect. Define mission-relevant success metrics before the pilot begins, track them throughout, and make production scaling decisions based on those same metrics rather than technical performance alone.

Separately from mission metrics, establish technical monitoring that alerts when model performance falls below defined thresholds. This includes accuracy for classification tasks, relevance for generation tasks, latency for time-sensitive applications, and error rates for integration points. Automated monitoring that flags anomalies without requiring manual review is essential for production systems that need to scale beyond what any individual can oversee.

- Connect AI metrics to specific mission outcomes that leadership tracks

- Establish automated technical monitoring with defined alert thresholds

- Schedule quarterly reviews to assess whether metrics remain appropriate

- Define explicit kill criteria: at what point would you scale back or stop?
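Writing kill criteria down as explicit thresholds before scaling makes the eventual decision mechanical rather than political. This is a minimal sketch under assumed metric names and thresholds (one mission metric, a latency target, and an integration error rate); it returns one of three decisions (continue scaling, hold and investigate, or scale back or stop) along with the reasons.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class MetricRule:
    name: str
    target: float            # level needed to keep scaling
    kill_at: float           # level agreed in advance that triggers scale-back
    higher_is_better: bool = True


def _meets(value: float, threshold: float, higher_is_better: bool) -> bool:
    return value >= threshold if higher_is_better else value <= threshold


def scaling_decision(rules: List[MetricRule], observed: Dict[str, float]) -> Tuple[str, List[str]]:
    """Return a decision plus the reasons behind it."""
    killed, below_target = [], []
    for rule in rules:
        value = observed.get(rule.name)
        if value is None:
            below_target.append(f"{rule.name}: no data")
        elif not _meets(value, rule.kill_at, rule.higher_is_better):
            killed.append(f"{rule.name}={value} past kill threshold {rule.kill_at}")
        elif not _meets(value, rule.target, rule.higher_is_better):
            below_target.append(f"{rule.name}={value} short of target {rule.target}")
    if killed:
        return "scale back or stop", killed
    if below_target:
        return "hold and investigate", below_target
    return "continue scaling", []


# Hypothetical metrics: one mission outcome plus two technical measures.
rules = [
    MetricRule("case_notes_on_time_rate", target=0.90, kill_at=0.75),
    MetricRule("p95_latency_seconds", target=5, kill_at=15, higher_is_better=False),
    MetricRule("integration_error_rate", target=0.01, kill_at=0.05, higher_is_better=False),
]
observed = {"case_notes_on_time_rate": 0.88, "p95_latency_seconds": 3.2, "integration_error_rate": 0.004}
print(scaling_decision(rules, observed))
```

Missing data is treated as "hold" rather than "continue" on purpose: a metric nobody is collecting should not quietly count as a pass.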

Handling Failure Well

Production AI will have failures. Models will produce errors. Integrations will break. Users will encounter situations the system was not designed to handle. The question is not whether failures will occur but whether the organization has built the capacity to detect them quickly, respond effectively, and learn from them without losing organizational confidence in the overall AI strategy.

Organizations that treat AI failures as learning assets rather than embarrassments iterate faster and build more resilient systems. Short iteration cycles of two to four weeks rather than large quarterly releases reduce the cost of any individual failure. Post-mortems focused on systemic factors rather than individual accountability produce actionable learning without creating the blame culture that leads people to hide problems until they become crises.

Having a documented rollback plan, a clear communication process for when users encounter AI errors, and defined escalation paths for different categories of failure means that production incidents are handled as managed events rather than reactive crises. This operational maturity is what distinguishes organizations that sustain AI capabilities at scale from those that abandon them after the first significant failure.
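Parts of a rollback plan and its escalation paths can be encoded in the system itself. The sketch below assumes a hypothetical setup in which each AI step has a manual equivalent: if the AI step raises an error, the failure is categorized, logged, routed to a named owner, and the item falls back to the existing process so frontline staff are not blocked. The categories, owners, and OutputQualityError are illustrative, not drawn from any specific tool.

```python
import logging
from typing import Callable, Optional, Tuple

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("ai_incidents")

# Hypothetical escalation paths: failure category -> who is notified.
ESCALATION = {
    "integration": "IT/operations lead",
    "output_quality": "AI system owner",
    "unexpected": "AI system owner",
}


class OutputQualityError(Exception):
    """Hypothetical error raised when an AI output fails a validation check."""


def run_with_fallback(
    ai_step: Callable[[str], str],
    manual_step: Callable[[str], str],
    item: str,
) -> Tuple[str, Optional[str]]:
    """Try the AI step; on failure, log, escalate, and fall back to the manual process."""
    try:
        return ai_step(item), None
    except (ConnectionError, TimeoutError):
        category = "integration"
    except OutputQualityError:
        category = "output_quality"
    except Exception:
        category = "unexpected"
    owner = ESCALATION[category]
    log.warning("AI step failed (%s) on %r; escalated to %s; using manual process",
                category, item, owner)
    return manual_step(item), owner


def flaky_ai(item: str) -> str:
    raise TimeoutError("model endpoint did not respond")


def manual_review(item: str) -> str:
    return f"queued for staff review: {item}"


result, escalated_to = run_with_fallback(flaky_ai, manual_review, "intake form 1042")
print(result, "|", escalated_to)
```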

Budget Planning for Sustainable AI

Production AI is infrastructure, not a project. Treating it as a time-limited initiative with a completion date is one of the most common structural failures in AI scaling. Sustainable AI capability requires ongoing budget allocation for subscriptions, infrastructure, monitoring, staff training, and governance, and this must be built into the organization's financial planning from the beginning.

What Production AI Actually Costs

The visible costs of production AI (subscription fees and API charges) are typically the smallest portion of total investment. For major AI platforms, nonprofit pricing makes subscription costs manageable: Microsoft 365 Copilot at $25.50 per user per month for eligible nonprofits, OpenAI ChatGPT at $8 per user per month (annual plan), and Anthropic Claude at discounts of up to 75% on Team and Enterprise plans for nonprofits.

The less visible costs are often larger. Integration and customization work to connect AI tools to existing systems requires staff time or contractor cost that organizations frequently underestimate. Data infrastructure (cleaning, organizing, and maintaining the data that AI depends on) is an ongoing operational expense rather than a one-time setup cost. Training and change management often cost two to three times the technology investment for successful adoption. Ongoing monitoring and governance require staff time for regular auditing and quality review.

Organizations that plan for 3-5x pilot costs when scaling to full production deployment will be better positioned than those who budget based on pilot costs alone. Connecting AI investment to demonstrable mission outcomes is also essential for convincing funders and boards to support ongoing operational funding rather than just innovative project grants.
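The rules of thumb in this section can be turned into a rough planning envelope. This sketch uses the nonprofit prices quoted above and two hypothetical inputs (40 production users and a $15,000 pilot). It treats the subscription total as the technology investment, which understates that investment if you also pay for API usage, integration, or cloud infrastructure. Treat the output as a starting point for a budget conversation, not an estimate.

```python
from typing import Tuple

# Nonprofit prices quoted above, per user per month. Confirm current
# pricing and eligibility with each vendor before budgeting.
NONPROFIT_MONTHLY_PRICE = {
    "Microsoft 365 Copilot": 25.50,
    "ChatGPT (annual plan)": 8.00,
}


def annual_subscription(users: int, monthly_price: float) -> float:
    """Visible cost only: seats x monthly price x 12 months."""
    return users * monthly_price * 12


def scaled_range(base: float, low: float, high: float) -> Tuple[float, float]:
    """Apply a rule-of-thumb multiplier range to a base cost."""
    return base * low, base * high


users = 40  # hypothetical production user count
subscriptions = annual_subscription(users, NONPROFIT_MONTHLY_PRICE["Microsoft 365 Copilot"])
# Rule of thumb from the text: training and change management at 2-3x technology spend.
change_mgmt = scaled_range(subscriptions, 2, 3)
# Rule of thumb from the text: production at 3-5x pilot costs.
pilot_cost = 15_000  # hypothetical pilot spend
production = scaled_range(pilot_cost, 3, 5)

print(f"Subscriptions: ${subscriptions:,.0f}/year")
print(f"Training and change management: ${change_mgmt[0]:,.0f} to ${change_mgmt[1]:,.0f}")
print(f"Production envelope: ${production[0]:,.0f} to ${production[1]:,.0f}")
```

Even in this simplified form, the visible subscription line is the smallest number on the page, which is the point the cost discussion above makes.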

Moving from Experiment to Organizational Capability

The organizations that succeed at scaling AI share a perspective that distinguishes them from those stuck in permanent pilot mode: they treat AI as an organizational capability to be developed rather than a series of projects to be completed. This perspective shapes how they resource AI initiatives, how they measure success, how they communicate with staff, and how they plan for the long term.

Developing organizational AI capability means building the data infrastructure, governance frameworks, and human skills that enable any specific AI application to succeed. It means having leaders who can evaluate AI tools critically, staff who know how to collaborate productively with AI systems, and processes that incorporate AI outputs while maintaining appropriate human judgment for decisions that require it. This foundation, once built, makes every subsequent AI deployment faster and more successful than the last.

The path from pilot to production is not a straight line. There will be setbacks, iterations, and moments when scaling a specific pilot is the wrong decision because the organizational conditions are not yet right. That is not failure; that is learning. The organizations that build lasting AI capabilities are those that approach each setback with curiosity about what it reveals rather than defensiveness about what it costs.

For additional guidance on the organizational side of AI adoption, the articles on managing AI resistance and building AI champions address the human dynamics that make or break production deployment. For the strategic framing that should precede any scaling commitment, AI strategic planning for nonprofits offers a structured approach to connecting AI investments to mission outcomes.

Ready to Move Beyond the Pilot Stage?

We help nonprofits build the organizational infrastructure, governance frameworks, and human capabilities needed to scale AI from promising experiment to lasting impact. Connect with our team to explore what it would take to make your AI work truly sustainable.